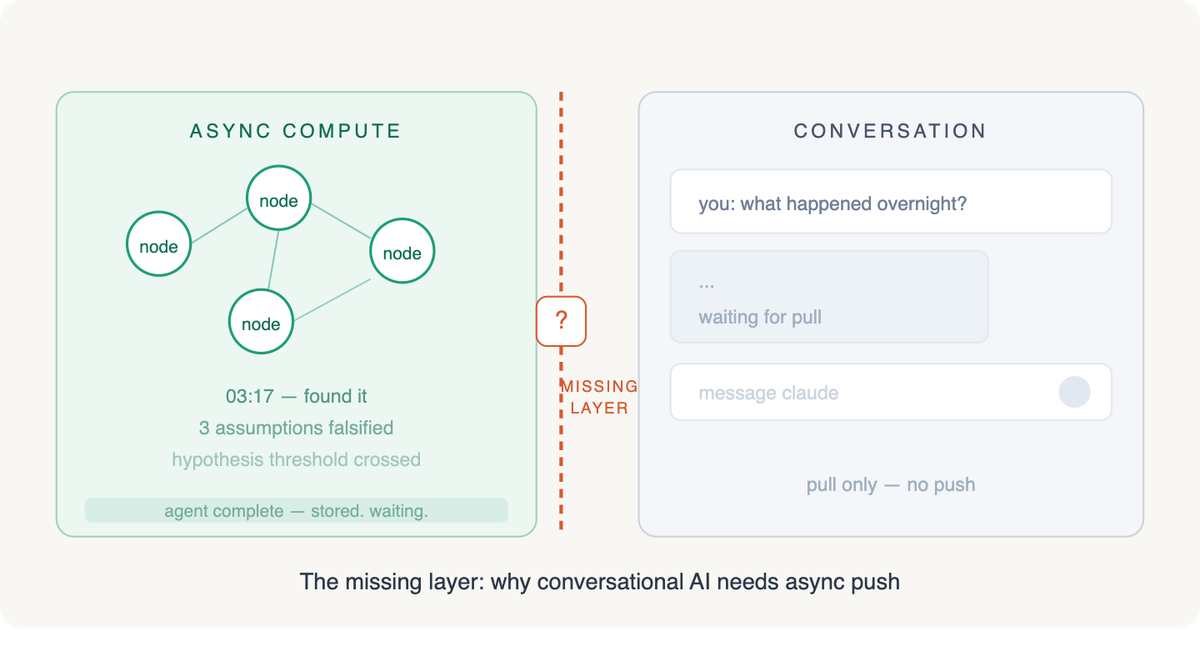

The Missing Layer: Why Conversational AI Needs Async Push

AI agents can already think overnight. They monitor research, evaluate evidence, run scheduled analyses. The compute is async. The delivery isn't. When an agent discovers something at 3am, it has no native way to reach you in the medium where you'd actually act on it.

The workarounds are all format downgrades. Email loses interactivity. Dashboards require you to remember to check them. Push notifications are ephemeral, stripped of context. Every one forces the same move: you leave the environment where you can engage with the finding and enter one where you can only read about it.

Everyone Is Circling This

The major platforms have all recognized the problem. Their solutions prove the gap.

ChatGPT Pulse (September 2025, Pro-only at $200/month) delivers 5-10 personalized visual cards each morning based on chat history, memory, and connected apps. You click into a card, and only then does a conversation start. Push-to-card, not push-to-conversation: the async result arrives in a secondary format that you have to promote into the conversation medium. Pulse also stops after a few reports and shows "Great, that's it for today." That reads as a UX decision, but it is also a compute budget: five to ten cards per night, for every Pro subscriber, whether they engage or not.

Google Gemini has the deepest integration surface: Gmail, Calendar, Photos, Drive, YouTube. They've built both Scheduled Actions (recurring prompts) and Goal Scheduled Actions (dynamic, adaptive monitoring that reviews its own outputs and adjusts). Their Personal Intelligence beta reasons across connected apps proactively. But delivery lands as notifications and email. Async compute, downgraded delivery.

Microsoft Copilot Cowork (Frontier program, March 2026) is the most complete execution model: thirteen built-in skills, including Daily Briefing and Calendar Management. It creates plans, reasons across files and tools, and carries work forward with checkpoints. But it is enterprise-only and locked into M365, and the Daily Briefing is a skill within an agent framework, not a conversation-first push channel.

Anthropic's KAIROS (unreleased, discovered in the Claude Code source leak, March 2026) is a persistent background agent running on heartbeat loops. It evaluates context to decide whether to act or stay quiet, with push notification capability. Architecturally the closest to what's needed: a system that can initiate, not just respond. But it's developer-facing (Claude Code terminal), not consumer-facing, and it sits behind feature flags. The trust surface is enormous. If KAIROS restarts the wrong service at 3am or replies to a customer with bad information, the liability model is entirely different from a CLI that waits for commands.
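To make the heartbeat pattern concrete, here is a minimal sketch of a generic act-or-stay-quiet loop. It is not KAIROS's implementation, which is unreleased; `Finding`, `heartbeat`, the relevance score, and the 0.7 threshold are all hypothetical. The loop also counts evaluations, because every tick spends inference even when the decision is to stay silent.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass
class Finding:
    summary: str
    relevance: float  # hypothetical 0..1 score from whatever evaluator the agent uses


def heartbeat(
    ticks: int,
    observe: Callable[[int], Iterable[Finding]],
    threshold: float = 0.7,
) -> Tuple[List[Finding], int]:
    """Run `ticks` heartbeat iterations; return (findings to push, evaluations spent).

    A production loop would sleep between ticks and run indefinitely; a fixed
    tick count keeps this sketch deterministic.
    """
    pushed: List[Finding] = []
    evaluated = 0
    for tick in range(ticks):
        for finding in observe(tick):
            evaluated += 1  # every evaluation costs inference, even if we stay quiet
            if finding.relevance >= threshold:
                pushed.append(finding)  # act: this would initiate contact with the user
            # otherwise: stay quiet and let the next tick re-evaluate
    return pushed, evaluated


# Usage: alternate low- and high-relevance observations across four ticks.
def demo_observe(tick: int) -> List[Finding]:
    if tick % 2 == 0:
        return [Finding("minor source update", 0.2)]
    return [Finding("assumption falsified by new evidence", 0.9)]


pushed, evaluated = heartbeat(4, demo_observe)
print(len(pushed), evaluated)  # 2 4
```

The evaluation counter is the detail worth noticing: the loop's cost scales with how often it ticks, not with how often it has something worth saying.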

The pattern: every major player has built the async compute layer. Every one has built a delivery mechanism. All stop one step short. They deliver cards, notifications, task completions, terminal alerts. Not conversations.

What Push-to-Conversation Actually Changes

I hit this wall building a knowledge graph system that runs overnight analyses against a growing research base. The agents find things: three assumptions falsified by new evidence, a competitor's move that reshapes a thesis, a hypothesis that crossed its expiration threshold. The findings sit in a database until I open a chat and pull them. The overnight work lands as a digest I read somewhere else, then have to carry back into a conversation before I can act on it.

If conversations are where you do your best thinking with AI, conversations should be where async results arrive. Open Claude in the morning and find a thread already waiting: "Overnight, three new sources challenged your assumption about NVIDIA's inference moat. Here's what shifted and why it matters." You're immediately in context. You can drill in, challenge the counter-argument, and the AI has the full graph of your prior thinking loaded. No format translation. The async result arrived where you'll use it.

That changes what agents can be. Today's autonomous agents are functional but delivery-crippled. They discover, evaluate, synthesize, and then their output sits until you pull it. Push-to-conversation makes the output first-class: full context, full interactivity, full continuity with prior work.

It also creates a natural feedback loop. When the agent pushes a finding and you engage with it, that interaction feeds directly back into the agent's model of what matters. No dashboard clicks, no dismiss buttons. The feedback is the conversation itself.
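One simple way to picture that loop, assuming the agent keeps a per-topic interest weight: treat each pushed conversation as a trial, and nudge the topic's weight toward 1 when the user engages and toward 0 when they ignore it. The exponential moving average below, `update_interest`, and the `alpha` and `prior` values are illustrative choices, not taken from any shipping product.

```python
from typing import Dict


def update_interest(
    weights: Dict[str, float],
    topic: str,
    engaged: bool,
    alpha: float = 0.3,
    prior: float = 0.5,
) -> Dict[str, float]:
    """Return a new weight map with `topic` nudged toward 1.0 (engaged) or 0.0 (ignored).

    `alpha` controls how fast recent behavior overrides history; `prior` is the
    starting weight for a topic the agent has never pushed before. Both values
    are illustrative assumptions.
    """
    old = weights.get(topic, prior)
    signal = 1.0 if engaged else 0.0
    updated = dict(weights)  # leave the caller's map untouched
    updated[topic] = (1 - alpha) * old + alpha * signal
    return updated


# Usage: the user digs into one pushed thread, then ignores the next one.
w = update_interest({}, "inference-moat", engaged=True)   # weight 0.5 -> about 0.65
w = update_interest(w, "inference-moat", engaged=False)   # about 0.65 -> about 0.455
```

The engagement signal here is just "did the user reply in the thread", which is the sense in which the conversation itself is the feedback.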

The Cost Problem

There's a structural reason this layer doesn't exist, and it's economic.

Every conversation today is user-initiated. You open the app, type a prompt, and the platform incurs compute cost against clear intent. Push-to-conversation inverts this: the platform pays for compute speculatively, against predicted intent rather than expressed intent. Pulse runs overnight for every Pro subscriber whether they engage or not. KAIROS heartbeat loops consume inference on every tick.
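The asymmetry shows up in back-of-the-envelope arithmetic. A user-initiated conversation has an engagement rate of effectively 1, because the user asked. A speculative push only pays off when the user engages, so the cost of each useful push is the cost of a push divided by the engagement rate. The function name and every number below are hypothetical placeholders, not any platform's real costs.

```python
def cost_per_engaged_push(
    pushes_per_day: float,
    cost_per_push: float,
    engagement_rate: float,
) -> float:
    """Daily speculative spend divided by the pushes the user actually engages with.

    Algebraically this reduces to cost_per_push / engagement_rate: the lower the
    engagement, the more each useful push effectively costs.
    """
    if not 0 < engagement_rate <= 1:
        raise ValueError("engagement_rate must be in (0, 1]")
    daily_spend = pushes_per_day * cost_per_push
    engaged_pushes = pushes_per_day * engagement_rate
    return daily_spend / engaged_pushes


# Hypothetical numbers: 8 pushes a day at $0.05 each.
user_initiated = cost_per_engaged_push(8, 0.05, engagement_rate=1.0)  # about $0.05
speculative = cost_per_engaged_push(8, 0.05, engagement_rate=0.25)    # about $0.20
```

At a 25% engagement rate, each useful push costs four times what the same compute costs when the user asks for it.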

This is why Pulse is gated behind $200/month. Why KAIROS sits behind feature flags. Why Google's Scheduled Actions require explicit user setup: the user pre-commits to the value, which makes the economics work. The platforms that figure out cost-effective async push at scale (through model distillation, intelligent scheduling, or tiered compute allocation) will own this layer. The ones that can't will keep shipping prettier format downgrades.

Pulse's "that's it for today" is both a UX boundary and a cost boundary. The platform that solves push-to-conversation has to solve speculative compute economics alongside unsolicited delivery UX. Those are coupled problems.

The Three-Part Platform Gap

The infrastructure almost exists. Model Context Protocol (MCP) servers give AI tools persistent backends with databases, APIs, and scheduled triggers. Server-side AI can call MCP tools on its own, and scheduled agents already run unattended and produce results.

The missing piece looks like an API: the ability for an external system to say "start a conversation for user X with this context and these results." But the API is the simplest of three problems.
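To make the API piece concrete (the simplest of the three), here is a minimal sketch of what such a request might carry, assuming a JSON payload. No platform exposes an endpoint like this today; `PushConversationRequest` and all of its fields are hypothetical, chosen to mirror the phrase above: a user, some context to load, and the results to open with.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, List


@dataclass
class PushConversationRequest:
    """Hypothetical payload an external system (say, an MCP server's scheduled job)
    could send to ask a chat platform to open a conversation on a user's behalf."""

    user_id: str
    title: str
    opening_message: str
    context_refs: List[str] = field(default_factory=list)  # prior threads or graph nodes to load
    results: List[Dict[str, Any]] = field(default_factory=list)  # structured findings behind the message
    source: str = "unknown"  # which agent or server initiated the push

    def to_json(self) -> str:
        """Validate the minimum fields and serialize to JSON."""
        if not self.user_id or not self.opening_message:
            raise ValueError("user_id and opening_message are required")
        return json.dumps(asdict(self), sort_keys=True)


# Usage: the overnight knowledge-graph job asks for a morning thread.
request = PushConversationRequest(
    user_id="user-x",
    title="Overnight: inference moat assumption challenged",
    opening_message="Overnight, three new sources challenged your assumption about NVIDIA's inference moat.",
    context_refs=["thread-inference-moat"],
    results=[{"assumption": "inference moat", "status": "challenged", "new_sources": 3}],
    source="kg-overnight-analysis",
)
payload = request.to_json()
```

Passing `context_refs` as references rather than inlined text is deliberate: the platform, not the caller, loads the user's prior thinking, which is what would let the thread open already in context. Serializing the request is the easy part.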

The second is delivery UX. How many conversations can an AI initiate per day before it becomes noise? Pulse's design, with capped daily delivery, curation controls, and thumbs up/down feedback, shows OpenAI already working through this.

The third is the trust and permission model: when can an AI initiate a conversation, about what, and at what level of autonomy? KAIROS's feature flags reflect genuine product uncertainty about where proactive crosses into intrusive.
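The two non-API problems can share one gate that every push must clear before it reaches the user. The sketch below assumes a per-user policy with a daily cap (delivery UX) plus a set of opted-in topics and a maximum autonomy level (trust model). `PushPolicy`, the `Autonomy` levels, and the default cap of 3 are hypothetical illustrations, not any platform's actual controls.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Dict, Set


class Autonomy(IntEnum):
    INFORM = 1   # push a finding, take no action
    PROPOSE = 2  # push a finding plus a proposed action awaiting approval
    ACT = 3      # act first, then report in the conversation


@dataclass
class PushPolicy:
    daily_cap: int = 3
    allowed_topics: Set[str] = field(default_factory=set)
    max_autonomy: Autonomy = Autonomy.INFORM
    sent_per_day: Dict[str, int] = field(default_factory=dict)  # keyed by ISO date string

    def allow(self, day: str, topic: str, autonomy: Autonomy) -> bool:
        """Return True and count the push if it clears every check; otherwise False."""
        if topic not in self.allowed_topics:
            return False  # trust: the user never opted in to this topic
        if autonomy > self.max_autonomy:
            return False  # trust: requested autonomy exceeds what the user granted
        if self.sent_per_day.get(day, 0) >= self.daily_cap:
            return False  # delivery UX: today's budget is spent
        self.sent_per_day[day] = self.sent_per_day.get(day, 0) + 1
        return True


# Usage: research pushes allowed up to "propose", at most two per day.
policy = PushPolicy(daily_cap=2, allowed_topics={"research"}, max_autonomy=Autonomy.PROPOSE)
```

Rejected pushes are not counted against the cap, so an over-eager agent cannot burn the user's daily budget on topics or autonomy levels they never granted.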

Solving all three creates the notification layer for the entire MCP ecosystem. Every MCP server builder hits this wall: powerful async backends, no way to close the loop without pulling the user out of context.

Beyond Research Workflows

The examples so far are knowledge-worker centric. The platform opportunity is broader.

A CI/CD pipeline fails at 2am. The agent triages the failure, identifies the root cause, and opens a conversation with the on-call engineer: "Build failed on main. Root cause is a dependency conflict in commit abc123. Here's a proposed fix." The engineer reviews and approves in the conversation. No context switch to a monitoring dashboard, no format downgrade to a PagerDuty alert linking to a log file.

A sales lead scoring model identifies a high-intent prospect after hours. The AI pushes a conversation to the account executive (AE): "This prospect visited pricing three times, downloaded the integration guide, and matches the profile of your last three closed deals. Here's a draft outreach." The AE refines the message in the same medium and sends.

Support ticket escalation. Compliance monitoring. Inventory alerts. Every domain with async detection and human-in-the-loop response is a candidate.

Where This Lands

Push-to-conversation doesn't replace the inbox. Email has decades of organizational affordances: folders, labels, search, forwarding, audit trails, legal discovery. Those aren't going anywhere. What gets displaced is the informational half of the inbox: the status updates, research digests, FYI forwards, automated reports. Those are all more useful as interactive conversations than as static messages.

The end state: AI that continues thinking after you close the window and picks up the thread when you come back. The conversation doesn't end; it has periods where the AI works in the background and periods where you're actively in it. The synchronous model was the right starting point, but the most valuable AI work now happens between sessions. The gap between async compute and conversational delivery is where the next platform layer gets built. The platform that connects all three pieces (the API, the delivery UX, and the trust model) will define how agents actually reach the people they work for.