Why Every AI Lab Shipped a Coding App This Quarter

In November 2025, Google shipped Antigravity, a full IDE built around the Gemini 3 model. By January 2026, Anthropic had extended Claude Code's agentic capabilities into its desktop app. On February 2, 2026, OpenAI released the Codex desktop app for macOS. Three frontier labs, three consumer-facing coding applications, three months. This isn't a coincidence, and it isn't about making coding easier. It's a natural move toward owning the developer workflow, and if you're building a product that sits between a model provider and a developer, the implications are worth sitting with.

I've been building MCP servers and working with these orchestration layers for months now, and the pattern I'm seeing isn't just competition. It's vertical integration. The labs aren't adding coding features to attract developers. They're integrating forward into the workflow because that's where the value accrues. And the implications extend well beyond coding tools.

The Numbers Behind the Move

Anthropic's Claude Code reached an estimated $1B annualized run-rate within about six months of its broader launch, a signal that reshaped strategy across the industry. OpenAI responded by bundling Codex with ChatGPT Plus, Pro, Business, and Enterprise subscriptions. Google offered Antigravity as a free public preview with rate-limited Gemini 3 usage. The pricing message is clear: coding agents are becoming table stakes for AI subscriptions, not standalone products.

Enterprise AI spending provides the strategic context. The a16z enterprise AI survey from January 2026 reported that average LLM spend reached $7 million in 2025, nearly tripling from $2.5 million in 2024, with projections suggesting continued 50-65% year-over-year growth. The labs aren't building desktop apps because they love IDEs. They're building them because whoever owns the interface where work happens is best positioned to retain the spend.
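The growth figures are easy to sanity-check. The short Python sketch below uses only the survey averages quoted above. The 2026 band it prints is an illustrative extrapolation, assuming the projected 50-65% range applies to the 2025 average for one more year; it is not a number the survey reports.

```python
# Back-of-envelope check on the survey averages quoted above (figures in $M).
spend_2024 = 2.5
spend_2025 = 7.0

# Year-over-year multiple: 7.0 / 2.5 = 2.8, i.e. "nearly tripling".
multiple = spend_2025 / spend_2024

# Illustrative extrapolation (an assumption, not a survey figure): apply the
# projected 50% and 65% growth rates to the 2025 average for a single year.
low_2026, high_2026 = (round(spend_2025 * (1 + rate), 2) for rate in (0.50, 0.65))

print(f"2024 -> 2025 multiple: {multiple:.1f}x")
print(f"Illustrative 2026 range: ${low_2026}M to ${high_2026}M")
```

Even the low end of that band would put the average above $10M, which is the kind of spend a lab wants flowing through its own interface.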

The investment case goes deeper than revenue. Both OpenAI and Anthropic use their coding agents extensively in their own R&D and product engineering. OpenAI explicitly describes GPT-5.3-Codex as having helped build itself; Anthropic says Claude Code was heavily used to build Cowork. When a tool accelerates the creation of the next tool, it becomes an R&D flywheel. That changes the math on how much a lab is willing to invest in making these tools cheap or free.

What Each Lab Actually Shipped

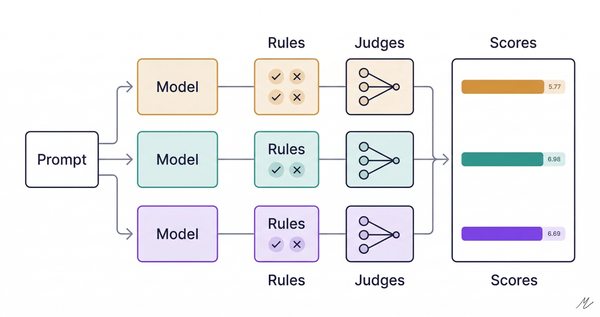

The approaches reveal each lab's theory of how developers will work. OpenAI's Codex is built around parallelism: multi-agent workflows tackle different parts of a codebase simultaneously in isolated Git worktrees, and a Skills system extends into design, deployment, and testing. Independent analysis suggests Codex is significantly more token-efficient than Claude Code, trading some accuracy on complex problems for speed and cost.

Anthropic's Claude Code takes the opposite approach, optimizing for getting things right the first time. Independent benchmarks through 2025 often showed Claude ahead of Codex on SWE-bench Verified, though recent results put them roughly tied on core metrics, with Codex now leading on the harder SWE-bench Pro variant. Its expanding footprint tells you where Anthropic is heading: terminal, web, desktop, Slack, and now Cowork for non-technical knowledge work. Coding is the wedge; the real play is becoming the agent layer for all professional work.

Google's Antigravity is architecturally the most ambitious, combining an editor, agent orchestration, and browser-based automated testing. In practice, user reports have been mixed, with quality consistency, quota management, and model availability as recurring complaints.

The differences matter less than the convergence. All three labs are moving from selling model access to owning the environment where work happens.

The GitHub Integration Changes the Game

In early February, GitHub integrated both Claude and Codex as partner agents inside GitHub Copilot, available to Pro+ and Enterprise users. This matters more for independent tools than any desktop app launch.

GitHub is where the code already lives. It's where pull requests happen, where CI/CD runs, where teams collaborate. If Claude and Codex work as agents inside that environment, the core value proposition of a third-party coding tool shifts. The pitch used to be: "We integrate AI into your development workflow better than anyone." Now the workflow platform itself offers the same agents, from the same labs, at no additional cost to its Pro+ and Enterprise subscribers.

For Cursor specifically, this creates an uncomfortable dynamic. Cursor is a VS Code fork that built its differentiation on superior AI orchestration: a RAG-like system for local filesystem context, model-agnostic flexibility across Claude, GPT, and Gemini, and features like Composer mode for multi-file changes. In independent benchmarks, Cursor has performed competitively with and sometimes outperformed the labs' own tools, even using the same underlying models. The orchestration layer clearly adds value. But when the models themselves are available inside GitHub, the orchestration advantage becomes harder to sustain. And while Cursor's paying user base is meaningful, it faces a structural challenge: labs can bundle coding capabilities into subscriptions that already serve millions.

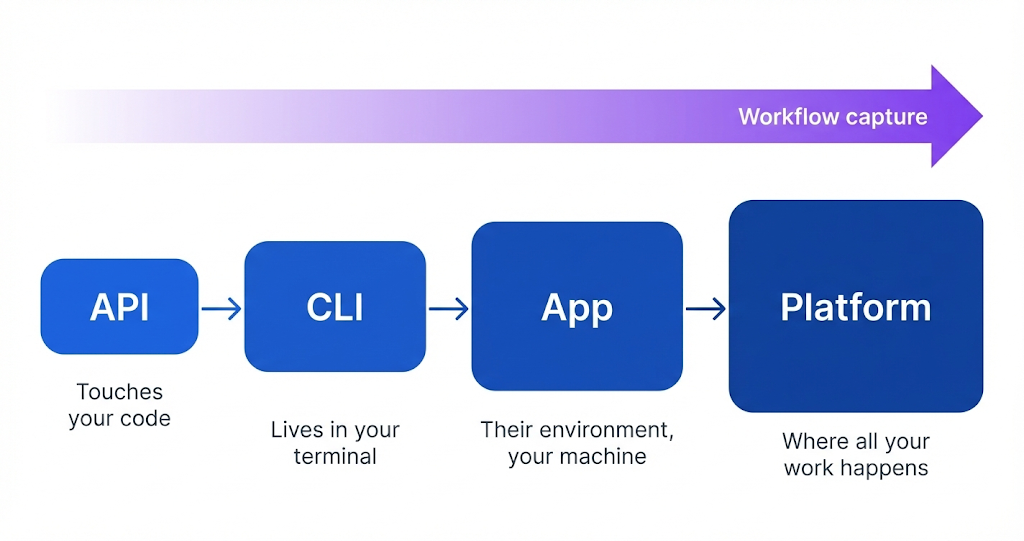

The Deeper Pattern: API to CLI to App to Platform

What's happening in coding tools follows a pattern playing out across AI products. The sequence goes: API (developers integrate your model), CLI (developers use your model directly), desktop app (developers work inside your environment), platform (you own the workflow end to end).

Each step captures more of the user's workflow. An API call touches your code. A CLI lives in your terminal. A desktop app puts the lab's environment on your machine. A platform makes that environment the place where all your work happens. The labs are moving along this sequence as fast as they can, because the further along you go, the harder it is for users to leave.

Anthropic's Cowork is the tell. It extends Claude Code's agentic capabilities into the desktop app for non-technical work: legal memos, marketing copy, data visualization, document management. Coding is just the first wedge. The real product is an AI work agent that lives on your desktop and handles whatever you throw at it.

Where This Gets Complicated for Independent Tools

Cursor has real advantages that won't evaporate overnight. Model agnosticism matters when labs release models at different quality levels for different tasks. No migration requirement matters when developers have years of workflow investment in VS Code extensions and configurations. And Cursor's orchestration layer genuinely performs well.

But the structural forces are moving against independent tools. Model access asymmetry is the biggest one: labs can optimize their own tools with unreleased models, early access to capabilities, and special tuning that third parties can't match. When Anthropic releases a new model, Claude Code gets it first; Cursor gets it when the API is ready.

Pricing pressure compounds the problem. Cursor charges a standalone subscription fee, while OpenAI includes Codex with ChatGPT Plus, which also gives you the latest GPT models, image generation, voice, and everything else. That's a difficult value comparison for any standalone tool.

The consolidation signals are already visible. Windsurf has gone through a messy period of failed acquisition talks and leadership departures, and it is less prominent than it was in early 2025, though the IDE is still being updated. The competitive field is narrowing.

The Viability Question

If I were advising a product leader evaluating this space, I'd frame the question differently than most analysts do. The question isn't "Will AI coding tools succeed?" They clearly will. Enterprise spending confirms it. The question is "Can an independent AI coding tool build a durable business when the model providers compete directly with their own customers?"

The honest answer is that it depends on how quickly the labs close the orchestration gap. Right now, tools like Cursor demonstrably add value above and beyond what the labs ship natively. That gap earned Cursor its market position. But the gap is narrowing with every release.

Short-term, through 2026, independent tools remain viable because of established workflows, model flexibility, and the friction of switching environments. Medium-term, into 2027 and beyond, the pressure intensifies as GitHub integration deepens and lab-native tools improve. The market likely consolidates around a handful of platforms: GitHub as the Microsoft ecosystem play, one or two lab-specific tools that own their model ecosystems, and possibly one model-agnostic survivor. Cursor is the strongest candidate for that last slot. But "strongest candidate to be the one independent survivor" is a very different investment thesis than the one that launched the company.

What This Means Beyond Coding

This is the part I keep turning over. The consumerization of coding is a leading indicator, not an isolated event. Whatever happens to independent coding tools will preview what happens to independent AI tools in every other vertical: writing, design, data analysis, customer support, legal work.

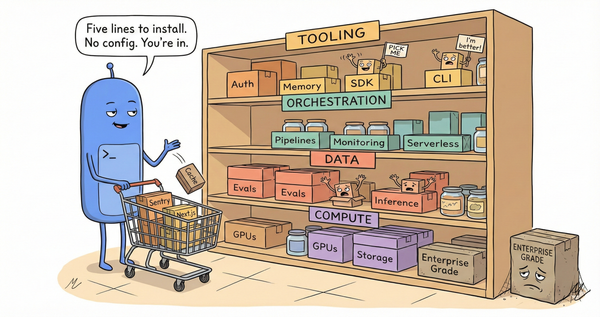

The pattern is the same everywhere. Labs provide the models. Startups build orchestration layers and user interfaces on top. Then the labs ship their own interfaces that bundle the model with the workflow, and the startups face the classic platform risk question: can I deliver enough value in the orchestration layer to survive when the platform competes with me?

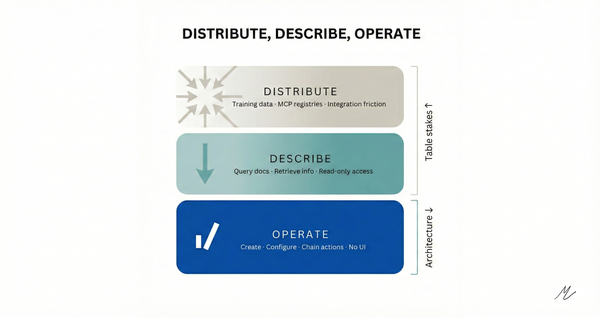

Having built MCP servers across different domains, I can say the orchestration layer is real and nontrivial. The work of connecting models to tools, managing context, handling errors gracefully, and maintaining state across complex workflows is genuinely hard. But it's also reproducible. The same architectural patterns apply across domains, which means any lab with enough engineering resources can replicate what independent tools build. The question is whether they will, and the coding tools market is answering that clearly.
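To make that orchestration work concrete, here is a minimal Python sketch of the loop described above: dispatch the tool calls a model requests, retry transient failures, trim the context sent back to the model, and carry state across steps. It is not MCP and not any lab's SDK. The model is a scripted stand-in, and every name (`ToolCall`, `run_tool`, `orchestrate`, `read_file`) is hypothetical, chosen for illustration.

```python
# Hypothetical names throughout; not MCP or any vendor SDK.
from dataclasses import dataclass, field
from typing import Callable, Union


@dataclass
class ToolCall:
    """A model's request to run a named tool with keyword arguments."""
    name: str
    args: dict


@dataclass
class Session:
    """State carried across steps: an ordered transcript of (role, text) pairs."""
    history: list = field(default_factory=list)


class TransientError(Exception):
    """Raised by a tool for failures worth retrying, such as a timeout."""


def run_tool(tools: dict, call: ToolCall, max_retries: int = 2) -> str:
    """Dispatch one tool call, retrying transient failures a bounded number of times."""
    handler = tools.get(call.name)
    if handler is None:
        # Report unknown tools back to the model instead of crashing the loop.
        return f"error: unknown tool {call.name!r}"
    for attempt in range(max_retries + 1):
        try:
            return handler(**call.args)
        except TransientError as exc:
            if attempt == max_retries:
                # Out of retries: surface the failure as text the model can react to.
                return f"error: {call.name} failed after {attempt + 1} attempts: {exc}"


Model = Callable[[list], Union[str, ToolCall]]


def orchestrate(model: Model, tools: dict, task: str,
                max_steps: int = 5, max_context: int = 20):
    """Alternate model turns and tool calls until the model answers in plain text."""
    session = Session(history=[("user", task)])
    for _ in range(max_steps):
        # Context management: only the most recent turns are sent to the model.
        reply = model(session.history[-max_context:])
        if isinstance(reply, str):
            session.history.append(("assistant", reply))
            return reply, session
        result = run_tool(tools, reply)
        session.history.append(("tool", f"{reply.name} -> {result}"))
    return "stopped: step limit reached", session


def scripted_model(context):
    """Stand-in for a real model: request one tool call, then answer."""
    role, text = context[-1]
    if role == "user":
        return ToolCall("read_file", {"path": "README.md"})
    return f"summary of: {text}"


attempts = {"read_file": 0}


def read_file(path):
    """Hypothetical tool that times out on its first call, then succeeds."""
    attempts["read_file"] += 1
    if attempts["read_file"] == 1:
        raise TransientError("timeout")
    return f"contents of {path}"


answer, session = orchestrate(scripted_model, {"read_file": read_file}, "Summarize the repo")
print(answer)
```

Nothing in the loop is specific to code: swap the tools and the same structure serves a legal or data-analysis agent, which is exactly why this layer is reproducible by anyone with the engineering resources.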

For product leaders evaluating AI investments, the lesson is structural. Before committing to any AI tool that sits between you and a model provider, it's worth asking: what happens when the provider ships this capability natively? If the answer depends entirely on the orchestration gap staying open, the tool may be a bridge, not a destination.