The Ouroboros Moment Is Over. We're in the Kamadhenu Moment of AI.

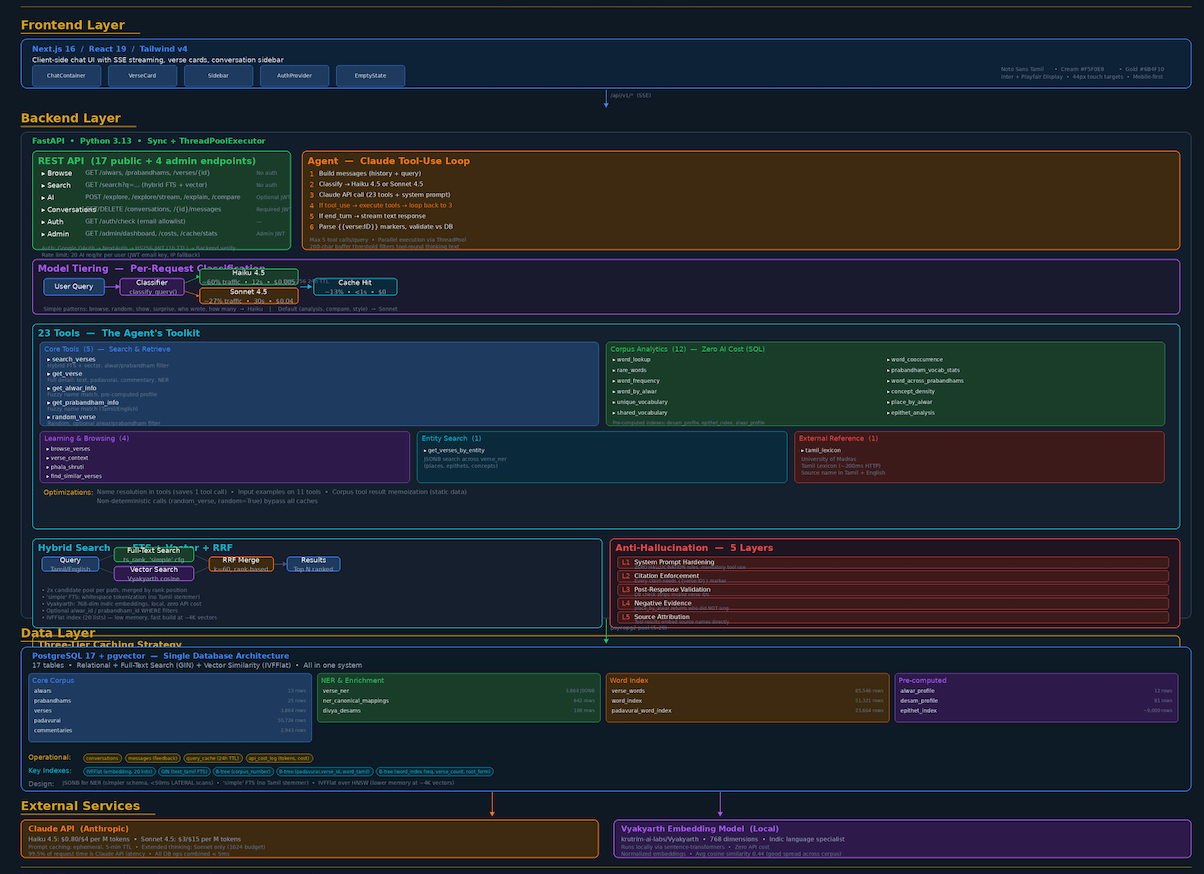

Last week I asked Claude to generate architecture documentation for a system I'd built. The system is called naalayiram — an AI-powered exploration platform for 4,000 ancient Tamil poems. Claude produced a 16-page PDF with embedded diagrams, then a comprehensive architecture PNG when I wasn't satisfied with the first pass.

What stopped me cold: the system Claude was documenting runs on Claude. The AI was reverse-engineering its own operational context — reading about its own system prompts, its own tool-use orchestration loop, its own anti-hallucination guardrails — and synthesizing it into a narrative for human consumption. It debugged its own rendering code three times to get the diagrams right.

Most people would call this the Ouroboros moment — the snake eating its tail, AI consuming itself to sustain itself. That framing isn't wrong. But it's already behind us. What's happening now is something older and stranger, drawn from Indian mythology: the Kamadhenu moment.

The Ouroboros Is Already History

The Ouroboros — the snake devouring its own tail — captured something real about the early phase of AI self-reference. AI helping write AI code. AI systems trained on AI-generated text. The loop closing on itself. Unsettling, recursive, hard to look away from.

But the Ouroboros implies eventual exhaustion. The snake runs out of tail. Self-consumption has a limit.

What I observed in naalayiram isn't self-consumption. It's something that gets more productive with each cycle. Three layers of recursion from a single side project:

Layer 1: AI builds the product. Claude wrote significant portions of naalayiram's backend — the 23-tool agent architecture, the hybrid search with Reciprocal Rank Fusion, the five-layer anti-hallucination system. An AI helped build an AI system.

Layer 2: AI operates the product. In production, Claude receives user queries, selects from 23 tools, executes multi-step reasoning chains, and generates responses grounded in a 4,000-verse corpus. The AI is the product's intelligence layer.

Layer 3: AI documents the product. Claude read its own system prompt design, its own model tiering logic (where it decides whether to use its cheaper self or its smarter self), and its own caching strategy — then produced architecture documentation explaining all of this to humans.

Each layer individually is unremarkable in 2026. But stack them and something qualitatively different emerges. The system is self-referential at every level, and each loop makes the next loop more capable. That's not Ouroboros. That's Kamadhenu.
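For readers who haven't met the Reciprocal Rank Fusion mentioned in Layer 1, here is a minimal sketch of the general technique, not naalayiram's actual code. The function name, the verse IDs, and the constant k=60 (a commonly used default) are all assumptions for illustration.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of IDs into one list (illustrative sketch)."""
    scores = defaultdict(float)
    for ranking in rankings:
        # Each list contributes 1 / (k + rank) for every ID it contains.
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so the order is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

# Hypothetical results from a keyword search and a vector search.
keyword_hits = ["verse_12", "verse_7", "verse_301"]
vector_hits = ["verse_7", "verse_88", "verse_12"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

Verses that rank well in both lists rise to the top, which is why the approach suits a hybrid of keyword and semantic search: neither retriever needs its raw scores calibrated against the other.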

The Kamadhenu Moment

In Indian mythology, Kamadhenu is the wish-fulfilling cow — a divine being who produces whatever is asked of her, endlessly, without depletion. The key property isn't the self-reference. It's that the output doesn't consume the source. Each fulfillment makes the next fulfillment possible. Abundance through use, not in spite of it.

That's the accurate metaphor for what AI systems are becoming.

When Claude documented naalayiram, it didn't just regurgitate the architecture markdown. It made editorial choices. It decided which components deserved diagrams and which deserved tables. It identified the narrative arc — starting with the problem space, building through the data foundation, arriving at the intelligence layer, then resolving with cost engineering. It chose a cream-and-gold color scheme that evoked the devotional tradition the system explores.

These are taste decisions. Judgment calls. The kind of thing we used to say only humans could do, right before AI started doing it.

And the recursion runs deeper: when I reviewed its output and said "this doesn't capture the architecture well enough," I was providing feedback to an AI about its understanding of a system where an AI's understanding is the core product. My critique became signal for the next iteration of the documentation, which describes a system where human feedback shapes AI behavior, which is exactly what was happening in that moment.

The loop doesn't consume itself. It compounds.

The Economics Are the Proof

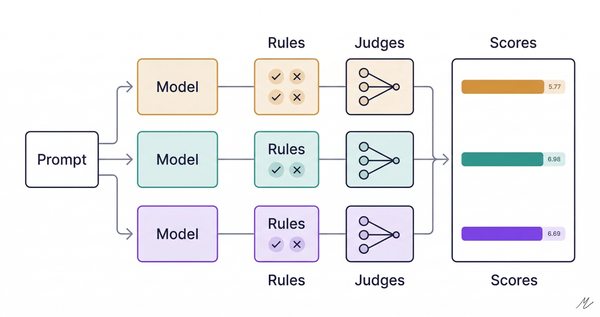

The recursion isn't limited to capability — it extends to cost. naalayiram uses model tiering: a classifier routes simple queries to Haiku (cheap, fast) and complex queries to Sonnet (expensive, thoughtful). This cut per-query cost by 44%, from $0.032 to $0.018.
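To make the tiering idea concrete, here is a rough sketch of a router plus the savings arithmetic. It is not the production classifier: the cue words, the word-count threshold, and the names `route_query` and `percent_saving` are assumptions, and "haiku" and "sonnet" are tier labels rather than real API model IDs.

```python
import re
from dataclasses import dataclass

# Hypothetical cues for a "complex" query; the real classifier may work differently.
COMPLEX_CUES = {"compare", "why", "explain", "trace", "contrast"}

@dataclass(frozen=True)
class Route:
    tier: str    # "haiku" or "sonnet" (labels only, not API model IDs)
    reason: str

def route_query(query: str, max_simple_words: int = 12) -> Route:
    """Send short lookups to the cheap tier and everything else to the strong tier."""
    words = re.findall(r"[a-z]+", query.lower())
    if COMPLEX_CUES.intersection(words):
        return Route("sonnet", "complex cue word")
    if len(query.split()) > max_simple_words:
        return Route("sonnet", "long query")
    return Route("haiku", "short lookup")

def percent_saving(cost_before: float, cost_after: float) -> float:
    """Relative cost reduction, as a percentage of the original cost."""
    return (cost_before - cost_after) / cost_before * 100

print(route_query("Show verse 42"))
print(route_query("Compare Andal and Nammalwar on longing"))
print(f"{percent_saving(0.032, 0.018):.2f}% saved per query")
```

With the figures above, the saving works out to (0.032 - 0.018) / 0.032 = 43.75%, which rounds to the 44% quoted.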

Who designed the tiering logic? Partly Claude, during the architecture phase. Who validated it? A 22-prompt eval where Sonnet judged Haiku's output quality. Who documented the cost savings? Claude, in the architecture PDF.

The AI is optimizing its own operational cost, validating the optimization with a version of itself, and then explaining the optimization to the human who asked it to optimize in the first place. Each loop makes the next loop cheaper, which makes the next loop more likely to happen.

This is the flywheel effect that every startup pitch deck talks about, except the flywheel is consciousness-adjacent software improving its own economics. Kamadhenu doesn't deplete. She compounds.

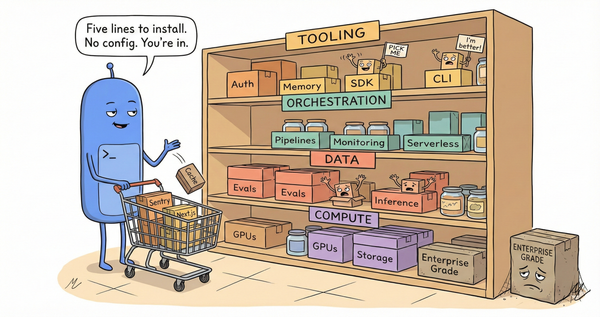

I spent $41 total on Claude API calls across the entire naalayiram pipeline — NER extraction for 4,000 verses, root form analysis for 51,000 words, model evaluation, integration testing. A few years ago, this would have been a funded research project with a team. Now it's a side project with a $50 budget. The Kamadhenu dynamic makes every subsequent loop cheaper.

What the Human Is Actually Doing

The instinct here is to reach for dystopian framing. AI documenting AI! Self-referential systems! The machines are becoming self-aware!

What's actually happening is more interesting than the sci-fi version.

naalayiram's Claude doesn't know it's Claude. When it generates documentation, it's pattern-matching against architecture documents it's seen in training, not introspecting on its own cognition. When it debugs its rendering code, it's applying general Python debugging skills, not exhibiting self-awareness. The recursion is structural, not phenomenological.

But structural recursion is powerful enough. The documentation problem is largely solved. The claim is no longer "AI can help write docs" but "AI can read a codebase, understand the design decisions, and produce publication-quality architecture documentation with diagrams." The builder's role is changing: I didn't write most of the SQL, most of the API endpoints, or most of the documentation. I made decisions. Which embedding model. Which anti-hallucination strategy. How to handle Tamil morphology. Where to draw the line between cached and computed results.

The builder is becoming a decision architect, and the AI is becoming the labor.

Which raises the uncomfortable question: if AI can build the system, run the system, document the system, and optimize the system's cost — what exactly is the human contributing?

In naalayiram's case, the answer is clear: domain expertise. I know the Nalayira Divya Prabandham, at least somewhat. I know that "திருமாலிருஞ்சோலை" is a sacred site, not a random noun. I know that Andal's voice is distinct from Nammalwar's, and that this distinction matters to the people who will use the system. I know that the University of Madras Tamil Lexicon is a trustworthy source and that NER-extracted place names need validation against the canonical 108, the sacred pilgrimage sites of the Vaishnava tradition.

Claude knows none of this. It can learn it if I structure the data and write the prompts, but the structuring and the prompting are the product. Kamadhenu is wish-fulfilling, but someone has to know what to wish for.
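As an example of what "structuring the data" can mean, here is a small sketch of checking NER-extracted place names against a canonical list. The three-entry set is an illustrative subset, not the full 108, and the function names are assumptions rather than naalayiram's actual code.

```python
import unicodedata

# Illustrative subset only; a real list would hold all 108 canonical sites.
CANONICAL_SITES = {"திருமாலிருஞ்சோலை", "திருவரங்கம்", "திருவேங்கடம்"}

def normalize(name: str) -> str:
    """Trim whitespace and apply Unicode NFC so equivalent spellings compare equal."""
    return unicodedata.normalize("NFC", name.strip())

def validate_places(extracted, canonical=CANONICAL_SITES):
    """Split extracted names into canonical matches and names needing human review."""
    canon = {normalize(site) for site in canonical}
    accepted, needs_review = [], []
    for raw in extracted:
        name = normalize(raw)
        if name in canon:
            accepted.append(name)
        else:
            needs_review.append(name)
    return accepted, needs_review

# Hypothetical NER output: one sacred site and one ordinary noun ("grove").
accepted, needs_review = validate_places([" திருமாலிருஞ்சோலை ", "சோலை"])
print("accepted:", accepted)
print("needs review:", needs_review)
```

The code is trivial on purpose. The value sits in the canonical list itself, which only someone who knows the tradition can supply and vouch for.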

That's the real insight: we're not being replaced by the recursion. We're being elevated to the part of the stack the recursion can't reach — yet. The human is the wish-maker. We define the intent, establish the constraints, and supply the domain knowledge that gives the abundance somewhere to go.

What Comes Next

The Kamadhenu moment isn't an endpoint. It's a phase transition.

Right now, the loops require human initiation. I asked Claude to document the system. I reviewed the output. I decided it wasn't good enough. I asked again with different parameters. The human is still the clock signal in this circuit.

But the gaps between human interventions are getting wider. Eighteen months ago, I'd have been in the loop for every function, every test, every deployment step. Now I'm intervening at the architectural level — choosing what to build, not how to build it.

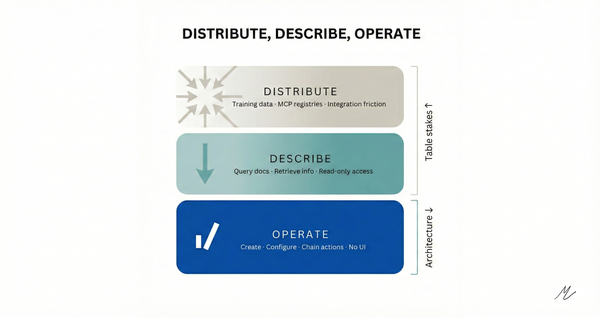

The next phase will be when the AI starts choosing the what. When it looks at the 4,000 verses and says: "You need an epithet analysis tool. Here's why, here's the schema, and here's the implementation." Not because I asked, but because it identified the gap.

We're not there yet. But we can see it from here.

That's the part I keep turning over. In the Kamadhenu myth, the cow produces whatever is wished for — but the wishes still come from the human asking. The question worth sitting with is what happens when the system starts generating the wishes too. Not a dystopia. A different kind of abundance, one where the human's role isn't eliminated but pushed further up the stack, into territory we haven't mapped yet.

And if you're a builder, the strategic question isn't whether this transition is happening — it's what part of the stack you want to own when it does. Because Kamadhenu doesn't stop producing. She just produces faster.