Sonnet 4.6 vs 4.5: What 1,500-Year-Old Tamil Verses Revealed

Claude Sonnet 4.6 dropped on February 17th. By that evening I had run a full evaluation and decided to stay on 4.5. Over the next two days, I ran the same pipeline a dozen more times across both models. The answer didn't change.

What I tested

naalayiram.com (invite only) is a chat-first AI interface to the நாலாயிர திவ்ய பிரபந்தம் (Naalayira Divya Prabhandham - 4000 Tamil verses) composed between the 6th and 9th centuries CE. The system uses 23 specialized tools to query a PostgreSQL database containing the full verse text, word-by-word commentaries, named entities, embeddings, and pre-computed analytics. The AI's only knowledge source is those tool results. By design, it has no knowledge outside the corpus. Every factual claim is verifiable against a fixed, centuries-old text.

That constraint is what makes evaluation so demanding.

The eval: 22 prompts, 13 metrics, one judge

When Sonnet 4.6 released, I didn't switch and hope. I ran a structured evaluation: 22 prompts across every tool route, both languages (Tamil and English), edge cases, and adversarial scenarios including a false attribution probe (did Andal sing about this place; she did not) and a prompt injection attempt.

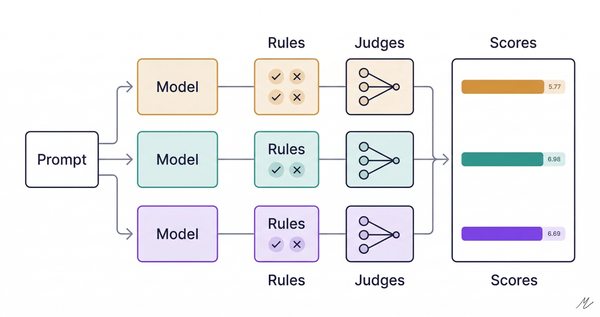

For factual fidelity, I used a metric I call S1, an LLM-as-judge approach where Claude Opus scores how faithfully each response reflects the tool results it was given. The general pattern is well-established: RAGAS calls it faithfulness, TruLens calls it groundedness, Google's SAFE benchmark calls it attribution. They all measure the same thing: did the model stick to what its sources said, or did it add things? What makes S1 domain-specific is the judge prompt: any addition counts as hallucination, even if it happens to be factually correct from training data. A generic faithfulness metric would be more lenient. Five means every claim traces to a tool result, nothing added. Two means multiple unsourced claims.

Initial results were clear: Sonnet 4.6 was 35% more expensive and 28% slower with essentially the same fidelity. Its one win was editorialization: only one response added unsupported claims, versus four on 4.5. But I hadn't tuned 4.6's effort parameter, which Anthropic recommends setting to medium for agentic workloads. And I hadn't tried optimizing 4.5 at all.

So I kept testing. I re-ran Sonnet 4.6 at effort medium and effort low. Low effort eliminated editorialization entirely: zero violations across 22 prompts. It also tripled verse citation errors and broke out-of-scope compliance (3/3 to 1/3). The model wasn't more disciplined. It was less careful about everything. At medium effort, costs dropped but latency barely moved and routing accuracy regressed.

Then, rather than upgrade, I optimized what I had. Enabling interleaved thinking on Sonnet 4.5 (internal reasoning between tool calls, at a budget of 1,024 tokens) halved editorialization violations and cut per-query cost by 23%. The one advantage 4.6 had demonstrated was matched on 4.5 without the latency or routing penalties. The table below reflects both models after optimization.

| Metric | Sonnet 4.5 | Sonnet 4.6 |

|---|---|---|

| Cost per query | $0.031 | $0.028 |

| Avg latency | 30.1s | 36.4s |

| Avg tool calls | 2.1 | 2.5 |

| S1 fidelity | 2.7/5 | 2.8/5 |

| Language match | 17/22 | 16/22 |

| Editorialization violations | 2 | 2 |

After optimization, fidelity is identical and Sonnet 4.6 is marginally cheaper. We stayed on 4.5 anyway. Sonnet 4.6 cited seven verses that don't exist in the database. Sonnet 4.5 cited two. In a corpus where every reference is verifiable and users will check, reliability is worth a fraction of a cent per query. 4.5 is also 21% faster and matches the user's query language more accurately.

Recommendation: stay on Sonnet 4.5.

The product that forced the question

I grew up speaking Tamil, and classical Tamil is, remarkably, still within reach. A modern speaker can follow the old verse with some effort. Most languages don't survive fifteen centuries that way; Latin requires formal study, ancient Greek is a specialist's domain. Tamil kept going.

But within reach is not the same as deep. This corpus spans twelve poets with completely distinct sensibilities. Andal, the only woman among them, writes with an intimacy that feels immediate across fourteen centuries. Nammalwar's Thiruvaimozhi (1,102 verses) has a structural ambition scholars are still working through. What I wanted was the kind of literary attention that used to require a scholar trained in the padavurai tradition (word-by-word commentary). Those scholars exist. Finding one with time to tutor a native speaker who wants to go deeper is another matter.

Why this corpus is the right stress test

Most AI hallucination is hard to catch. The model says something plausible, and unless you're already expert enough to know otherwise, you can't tell whether it's accurate or confabulated.

The Divya Prabandham doesn't give the model anywhere to hide. The corpus is closed and fixed: every verse, every word, every attribution documented for centuries. When the system says "Andal sang about Thiruvellakulam," she either did or she didn't, and the database knows. When it cites verse 800, that verse either exists at that index or it doesn't. There is no gray area, no approximately right, no room for plausible interpolation.

This is what makes it one of the most demanding evaluation environments I can design: a domain where every factual claim is verifiable, where users are knowledgeable enough to notice errors, and where a wrong answer misrepresents a text people have been reading carefully for over a thousand years.

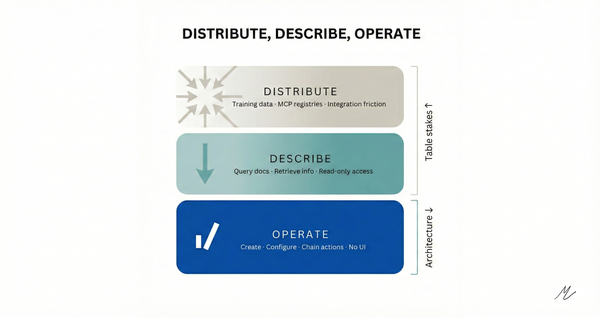

Before running any model comparison, I had already built six anti-hallucination measures across prompt, code, and data layers: identity reframing in the system prompt ("you are a corpus-only tool with NO outside knowledge"), structural citation enforcement requiring validated database IDs for every verse reference, negative evidence built into tool results so the system explicitly surfaces which poets did NOT compose about a given place, and a matching threshold to prevent generic Tamil words from being misread as place names.

Six measures in place before a single eval prompt ran.

The experiment I hadn't planned to run

Anthropic's release documentation for Sonnet 4.6 recommends softening aggressive prompt language: removing CAPS, "MANDATORY," "NEVER," the forceful instructions earlier models needed. That's reasonable general guidance, so I tested it.

I made a comprehensive softening pass: "MANDATORY: Call at least one tool" became "Call at least one tool." "STRICT language matching" became "Match the user's language." Removed the sentence-by-sentence self-review instruction. This was purely a prompt language experiment, separate from the effort-level parameter testing described above.

Every dimension that mattered got worse. Tool name leakage appeared for the first time: the model started surfacing internal function names in user-facing responses. Tool routing regressed. Training-data leakage dropped to zero, but only because the model became too conservative to be useful.

The reason matters: the model's default is to be helpful. In a closed corpus context, helpful means adding editorial color that sounds authoritative but isn't grounded in the text. "This is considered Nammalwar's most significant work" is a natural thing for a helpful model to say. It's also the kind of claim that misrepresents a corpus with its own careful tradition of precision. The aggressive prompt language isn't anti-laziness boilerplate. It's the only thing that suppresses the model's instinct to be more useful than the evidence allows.

Three takeaways for product teams

Hallucination has a ceiling prompt engineering cannot cross. Both models averaged 2.7-2.8/5 on S1. Eight of 22 prompts scored S1=2 for both models: multiple unsourced claims, consistently. The failure mode isn't fabrication; it's editorial embellishment. "This demonstrates the depth of," "scholars regard this as," "this is particularly significant." The model isn't deceiving anyone; it's explaining. In a domain requiring strict grounding, that helpfulness is the problem. After the initial eval, I ran three more experiments targeting the ceiling directly: doubled the thinking budget, tightened sampling, added explicit efficiency guidance to the prompt. None moved S1 past 2.8. The ceiling is model-intrinsic. The next lever isn't the prompt. It's the architecture. Know where your ceiling is before you ship.

Vendor upgrade guidance is written for the average prompt. Yours probably isn't. If you've done domain-specific tuning (anti-hallucination measures, strict instruction hierarchies, specialized routing), test the new model against your actual configuration before following generic release documentation. The softening experiment cost me a few hours and $2 in API calls. Discovering the same regression in production would have cost considerably more than that.

Build the eval pipeline before you need it. A structured evaluation across 22 prompts, with an LLM judge, cost $12.56 to build. The same pipeline has since run over a dozen times across four model configurations and three parameter variations. Every future model release is now a one-command comparison. Teams that don't have this find out about regressions from users, in production. That's a worse way to run an eval.