Introducing Tisram

Last Tuesday I opened my inbox to 247 unread items tagged "AI." Newsletters, RSS feeds, research drops, podcast transcripts, analyst notes. By Wednesday morning there would be another 200. I have been tracking this number for months. It does not go down. If anything, the production rate of AI commentary is itself accelerating faster than the capabilities it describes.

This is not a humble brag about being plugged in. It is the opposite. For most of the past year I have felt like I am losing a race that I did not sign up for, against a firehose that has no off valve. And I suspect most people working in or around AI feel the same way, even if the specific number is different. Maybe yours is 50 items a day. Maybe it is 500. The feeling is identical: the quiet anxiety that you are missing the signal in the noise, that somewhere in the pile is the article that would have changed how you think about a problem, and you will never find it because it is buried under seventeen hot takes about the same funding round.

The standard responses to this are not satisfying. Algorithmic feeds optimize for engagement, which in practice means they surface what is emotionally activating, not what is analytically useful. Newsletter curation helps but introduces a single editor's blind spots. "Just focus on fundamentals" is good advice for 2020, less useful when the fundamentals themselves are shifting monthly. And the honest truth is that most people, myself included, default to a kind of anxious skimming: scanning headlines, reading the first paragraph, bookmarking for later, never returning.

I wanted to try something different. Not a better feed, not a smarter filter, but a fundamentally different relationship with the information itself.

The thesis is simple, maybe too simple: you do not need to read everything. Three reads at a time, chosen with real care and analyzed with real depth, will compound into more understanding over months than anxious skimming ever could. Knowledge works like compound interest. Consistent small deposits of genuine insight, connected to what came before, build on each other in ways that volume alone cannot replicate.

This is not an original observation. Any researcher knows that reading five papers deeply teaches you more than reading fifty abstracts. But the question I kept getting stuck on was operational: how do you actually identify which three, out of hundreds, deserve the deep read today? Not which three are most popular, or most recent, or most likely to generate a take on social media. Which three, in the context of everything you have read before, will connect to the most and reveal the most about where things are heading?

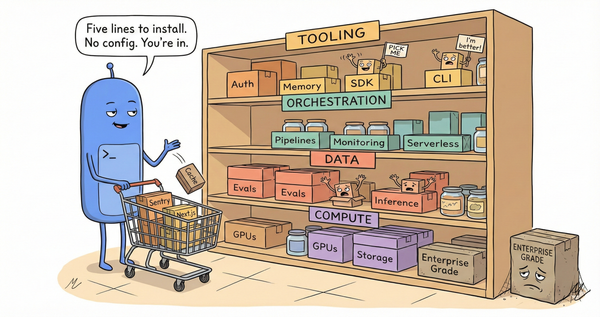

That question led to two things I built over the past year. The first is gyanagent.ai, an agentic AI system I built for my own use. Ten specialized agents ingest content from Gmail subscriptions, RSS feeds, podcast transcripts, YouTube channels, and research repositories. They categorize, summarize, tag, extract entities, and score relevance. The system has processed over 19,000 sources to date. Its job is not to tell me what to think. Its job is to reduce 247 inbox items to a working set of 20 or 30 that are plausibly worth my time, with enough metadata attached that I can make informed decisions about which ones to actually read.

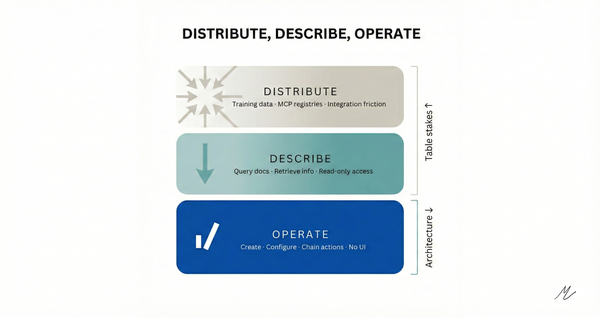

The second thing is tisram.ai, the public-facing layer where the curation becomes visible.

Tisram is a public research graph. When I publish, it is exactly three items. Might not every day, only when I find three things worth the depth. Each one includes the source, a 2-3 sentence analytical take (not a summary, an argument), a set of tags from a controlled vocabulary, a list of entities (companies, people, technologies, concepts), optional thread assignments that track multi-day research trajectories, and semantic connections to previously published items discovered through vector similarity search. The name is derived from the rhythmic grouping of three units per beat in Indian classical music.

The constraint of three is not arbitrary, though I will admit it started that way. It emerged from a few months of experimentation with different numbers. One per day was too sparse to find patterns. Five was enough that I started making compromises on analytical depth to hit the number. Three forces a genuine editorial decision every day: not "what happened" but "what matters, and why does it connect to what we already know?" The grouping itself carries information. When three articles from different sources and different domains land together, the juxtaposition often reveals something that none of them stated individually.

Take a recent example. One day's triplet included Oracle and OpenAI scaling back a flagship data center expansion, a profile of "powered land" prospectors creating a new real estate intermediary class for AI infrastructure, and The Economist arguing that AI data centers are scapegoats for electricity price increases actually driven by decades of deferred grid investment. Three different publications, three different beats, three different framings. But grouped together, they tell a single story about the AI infrastructure boom simultaneously contracting at the top, spawning extractive intermediaries in the middle, and being misblamed for structural failures at the bottom. That pattern is invisible if you read any one of those articles in isolation. It only appears when you place them next to each other and ask what they share.

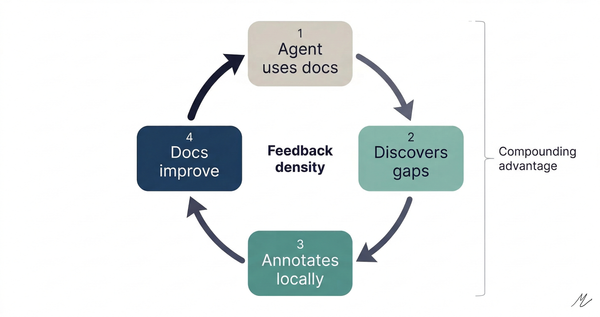

This is where the graph structure starts to earn its keep. Each item is not a standalone post. It is a node. Entities like OpenAI, Oracle, Meta recur across entries, creating implicit longitudinal tracks. Tags like "competitive-dynamics" and "ai-infrastructure" create categorical clusters. Threads like "ai-economics" or "ai-1.0-defensibility" are explicit multi-day research trajectories that I name and maintain. And semantic connections, discovered automatically through embedding similarity against the full published corpus, surface relationships I did not anticipate. The graph grows denser over time. Patterns that were invisible after a week become legible after a month.

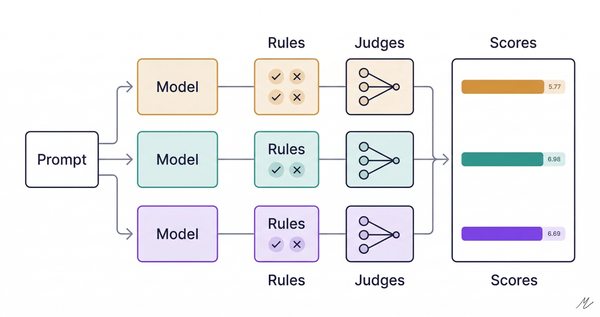

The workflow behind this is a collaboration between AI and human judgment that I think illustrates something important about where the real value sits in these systems. The AI side handles what it is genuinely good at: ingestion at scale (no human reads 19,000 sources), entity extraction, categorization against a taxonomy, embedding and similarity search, and even scoring candidate triplet groupings using an LLM-as-judge that evaluates narrative coherence, tag overlap, and thematic diversity. There is an algorithm that evaluates all possible three-item combinations from the queue and ranks them by how well they would work together as a daily entry. The AI is doing combinatorial analysis that would be impractical by hand.

But the human side handles everything the AI cannot. Which articles actually contain a novel insight versus a competent restatement of conventional wisdom. Whether a source is positioning (BCG publishing research about AI cognitive load that happens to recommend organizational change management, which is, of course, BCG's core business). What the analytical take should be, the argument that connects the article to the broader landscape, the buried signal that the article itself may not have surfaced. The quality bar. The editorial voice. These are judgment calls that require taste, domain knowledge, and a willingness to be opinionated. The AI proposes; the human disposes, refines, and adds the layer of analysis that makes it worth reading.

Neither side alone produces the result. I cannot process 19,000 sources. The AI cannot write "The buried signal is that autonomous agents requiring less oversight may produce better human outcomes than copilot patterns requiring constant attention, running directly counter to 'human in the loop' orthodoxy." That take requires reading the BCG survey, understanding the methodological limitations of cross-sectional self-report data, knowing what the "human in the loop" debate actually looks like in practice, and being willing to name the tension. The AI found the article. The human found the insight.

I want to be honest about the wrinkles because I think the complications are more interesting than the clean narrative.

First, the three-per-day constraint means I am constantly leaving potentially important things on the table. The queue is always longer than three. The grouping algorithm helps, but it optimizes for coherence within a triplet, not for global coverage of the field. There are topics I know I am underweighting because they do not group well with other items on a given day. This is a real cost, and I have not solved it.

Second, the knowledge graph is young. Five days of entries, fifteen items. The connection density is low. The semantic search finds meaningful links, but the corpus is small enough that the connections are obvious ones. The promise of emergent patterns revealing themselves over months is exactly that, a promise. I believe it because I have seen it work in smaller private experiments, but the public graph has not yet reached the scale where it becomes independently interesting as a dataset rather than as a daily publication.

Third, the analytical takes are opinionated by design, and opinionated means sometimes wrong. I have already had to revise my thinking on a few takes as subsequent reporting provided additional context. The graph does not have a built-in correction mechanism. An item from Day 3 might connect to an item from Day 1 that I would now frame differently. This is a design flaw I am thinking about.

Fourth, the AI pipeline is imperfect in ways that matter. Entity extraction misses things. Categorization is inconsistent at the margins. The semantic similarity threshold for connections is a knob I have had to tune repeatedly, and the right setting is genuinely unclear. These are engineering problems, not conceptual ones, but they affect the quality of the output in ways that are hard to see from the outside.

What I am more confident about is the underlying bet: that consistent, connected, opinionated curation produces a kind of understanding that neither firehose consumption nor passive algorithmic filtering can match. The graph is a forcing function. It forces me to decide what matters every day. It forces me to articulate why. It forces connections to prior work. And it creates a public record that I can revisit, challenge, and build on.

Tisram is one piece of a larger ecosystem we are building at Turanu AI, alongside evalrig.ai for AI evaluation infrastructure. The name Turanu comes from Turing and Ramanujan, computation and intuition, rigor and pattern recognition. That pairing captures something I keep returning to in this work: the most interesting results happen at the boundary between what machines compute and what humans perceive. Not in replacing one with the other, but in finding the seam where they reinforce each other.

I do not know if three is the right number for everyone. I do not know if the knowledge graph structure will prove more useful than a well-maintained notebook or a good memory. What I do know is that after several months of this practice, I understand the AI landscape differently than I did when I was skimming 247 items a day and retaining almost none of them. The compound interest is real. It is just slow, and it demands that you keep making the deposits.

Tisram is live at tisram.ai. Knowledge compounds three nodes at a time.