Flaws as Features: What Goal-Aware Inference Might Look Like

TL;DR: If AI assistants can reinterpret product flaws as features based on your specific goals, the evaluation step of shopping moves off the retailer's page and into the assistant. When Gemini recommended socks that hikers panned (loose elastic is bad for hiking but good for circulation), it showed what goal-aware inference could look like. If this capability scales, it threatens the $200B retail media industry.

Last week I made a purchase decision in ten minutes, while driving, in a voice conversation with Gemini. Research, evaluation, brand selection. The entire process.

I needed sleep socks for cold New England nights. Gemini suggested merino wool and surfaced a few options. I asked it to evaluate one. It read the reviews, identified the fit characteristics, and explained why it matched my needs. By the time I parked, I knew what I was buying.

But the interesting part wasn't the convenience. Gemini recommended a product with some specific complaints.

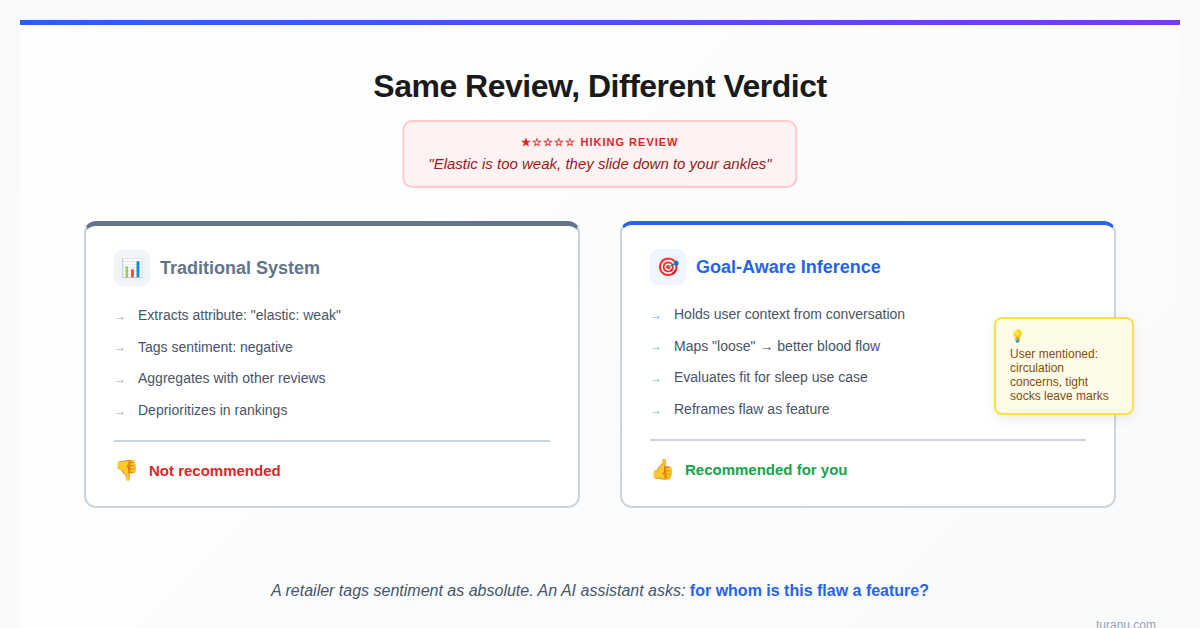

The Annsuki merino wool socks had one- and two-star reviews from some disappointed hikers: the elastic is too weak, they slide down to your ankles, they're too loose for serious use. Gemini read those complaints and told me to buy them anyway: "For a hiker, that's a 1-star review. For you, wanting to maintain blood flow while you sleep, that is a 5-star feature."

That's inference about use-case fit, not just retrieval.

I want to be careful not to overclaim. I observed this in one conversation. I don't know if Gemini consistently does this, or if I got lucky with a prompt sequence that happened to elicit useful reasoning. But if this is what's actually happening, if the model is genuinely reinterpreting product attributes through the lens of user goals, that's what I'd call goal-aware inference. The question is whether it's a reliable capability or a fluke I happened to catch.

The Difference Between Finding and Evaluating

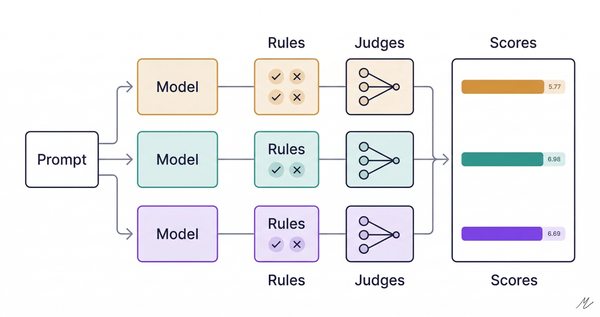

Traditional recommender systems prioritize pattern matching. Users who bought X also bought Y. Products with high aggregate ratings in category Z. Keyword overlap between query and description. A ranking algorithm that weights aggregate sentiment would likely deprioritize a product with recurring complaints about looseness.

What the assistant did was different. It took information that was nominally negative and recognized that the negativity was context-dependent. The same attribute that makes a product bad for one use case makes it good for another.

Loose elastic = bad for hiking, good for circulation.

Slides down = bad for boots, irrelevant for bed.

Not snug = failure for athletes, feature for sleepers.

I had mentioned early in the conversation that I was concerned about blood flow, that crew socks felt too tight, that I wanted something that wouldn't leave marks on my legs. The assistant held onto that context across multiple exchanges, and when it encountered the hiking reviews, it mapped their complaints onto my requirements.

If the assistant is doing what it appears to be doing, that's goal-aware inference: transforming data based on user intent, not just retrieving data that matches user keywords.
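
To make the contrast concrete, here is a minimal sketch in Python. Everything in it is illustrative: the review data, the attribute names, the hand-written effect table, and both scoring functions are my own assumptions, not a description of how Gemini or any retailer actually ranks products.

```python
from statistics import mean

# Hypothetical reviews: a star rating plus the attribute each review is about.
REVIEWS = [
    {"stars": 1, "attribute": "loose_elastic"},
    {"stars": 2, "attribute": "loose_elastic"},
    {"stars": 2, "attribute": "slides_down"},
    {"stars": 5, "attribute": "warm"},
]

# Hypothetical table: how each attribute affects each goal
# (+1 helps, -1 hurts, 0 irrelevant).
ATTRIBUTE_EFFECT = {
    "loose_elastic": {"hiking": -1, "sleep_circulation": +1},
    "slides_down": {"hiking": -1, "sleep_circulation": 0},
    "warm": {"hiking": +1, "sleep_circulation": +1},
}


def aggregate_score(reviews):
    """Goal-blind baseline: the average star rating."""
    return mean(r["stars"] for r in reviews)


def goal_aware_score(reviews, goal):
    """Average effect of the reviewed attributes on one goal, in [-1, 1]."""
    return mean(ATTRIBUTE_EFFECT[r["attribute"]].get(goal, 0) for r in reviews)


print(aggregate_score(REVIEWS))                        # 2.5
print(goal_aware_score(REVIEWS, "hiking"))             # -0.5
print(goal_aware_score(REVIEWS, "sleep_circulation"))  # 0.75
```

The same four reviews produce a mediocre 2.5-star average, a negative score for a hiker, and a clearly positive score for a sleeper. What the sketch hides is the hard part: here the effect table is written by hand, whereas in my conversation the mapping from attribute to goal appeared to be inferred on the fly.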

The socks arrived and they work: they stay on without being tight, and I wake up without red marks. The "flaw" was indeed a feature for my use case.

So Gemini's inference worked. It wasn't hallucination dressed up as insight. The assistant appears to have made a genuine prediction about product-use-case fit, and reality confirmed it.

Why This Matters Beyond Socks

The flaw-to-feature translation has implications for how AI systems evaluate products and services.

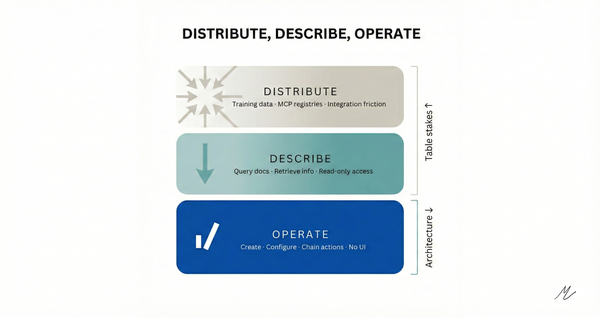

For that translation to work, three things have to be true. The system needs to maintain context about the user's actual goals, not just their search terms. Product attributes need to be treated as points on a spectrum, not as binary good/bad. And the system needs to reason about the relationship between attributes and use cases. That's more sophisticated than "show me items with high ratings that match these keywords." It's the difference between filtering and reasoning.

Negative reviews contain more signal than we typically extract. A negative review is often a story about mismatched expectations. The product did something the reviewer didn't want. But someone else might want exactly that thing.

An AI assistant that can read a negative review and ask "who would actually want this?" is doing something useful. It's finding the audience that the product accidentally serves well, even if that's not the audience it was designed for.

There's a wrinkle worth naming: this only works because the assistant remembered what I cared about. Earlier in the conversation, I'd explained my actual goal. A stateless system processing each query independently would have missed the connection entirely.
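
A toy sketch of that difference, in Python. The `Session` class and the phrase-to-goal table are hypothetical stand-ins; a real assistant would presumably infer goals from language rather than match fixed phrases.

```python
from dataclasses import dataclass, field

# Hypothetical phrase -> goal table; stands in for whatever the model infers.
GOAL_CUES = {
    "blood flow": "circulation",
    "too tight": "low_compression",
    "leave marks": "low_compression",
}


def goals_in(message: str) -> set[str]:
    """Goals implied by a single message, via naive phrase matching."""
    text = message.lower()
    return {goal for cue, goal in GOAL_CUES.items() if cue in text}


@dataclass
class Session:
    """Stateful: goals accumulate across every turn of the conversation."""
    goals: set[str] = field(default_factory=set)

    def hear(self, message: str) -> set[str]:
        self.goals |= goals_in(message)
        return self.goals


turns = [
    "I'm worried about blood flow and crew socks feel too tight.",
    "What do the reviews say about this pair?",
]

session = Session()
for turn in turns:
    stateful_view = session.hear(turn)

# A stateless system sees only the latest query.
stateless_view = goals_in(turns[-1])

print(stateful_view)   # contains 'circulation' and 'low_compression'
print(stateless_view)  # set()
```

By the time the review question arrives, the stateful session still knows about circulation and compression. The stateless view of the same question carries no goals at all, so it has nothing to reinterpret the reviews against.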

There's an architectural reason this is hard for retailers to replicate. A retailer's review system can tag attributes ("elastic: weak") and sentiment ("1-star"). What it can't tag is for whom the weakness is a feature. That requires maintaining a model of each user's actual goals. An LLM-based assistant can build that model naturally through conversation. Personalized attribute interpretation is baked into the architecture.

There's a second structural advantage: Gemini seems to have access to reviews across platforms, including REI, outdoor blogs, Reddit threads, and wellness sites. If that's the case, the same product gets evaluated differently by different communities. A hiker on REI calls loose elastic a flaw. A circulation-focused reviewer on a sleep blog calls it a feature. An assistant that can read across sources can triangulate in ways a single retailer's review system never could. Amazon only sees what's inside Amazon.
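
Here is a rough Python sketch of what triangulating across sources could mean. The mentions, source labels, and community labels are invented for illustration, and I am assuming the assistant can attribute each mention to a community, which I have no way to verify.

```python
from collections import defaultdict

# Hypothetical mentions of one attribute ("loose elastic") across sources.
# sentiment: -1 = described as a flaw, +1 = described as a benefit.
MENTIONS = [
    {"source": "retailer", "community": "hikers", "sentiment": -1},
    {"source": "outdoor_blog", "community": "hikers", "sentiment": -1},
    {"source": "forum", "community": "sleepers", "sentiment": +1},
    {"source": "wellness_site", "community": "sleepers", "sentiment": +1},
]


def sentiment_by_community(mentions):
    """Average sentiment per community instead of one pooled number."""
    buckets = defaultdict(list)
    for m in mentions:
        buckets[m["community"]].append(m["sentiment"])
    return {c: sum(v) / len(v) for c, v in buckets.items()}


def single_source_view(mentions, source):
    """What a system sees when it can only read its own reviews."""
    return sentiment_by_community([m for m in mentions if m["source"] == source])


print(sentiment_by_community(MENTIONS))          # {'hikers': -1.0, 'sleepers': 1.0}
print(single_source_view(MENTIONS, "retailer"))  # {'hikers': -1.0}
```

Read across all four sources, the attribute splits cleanly by community. Read from inside one retailer, it looks like a plain defect.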

The Stakes Are Higher Than Product Recommendations

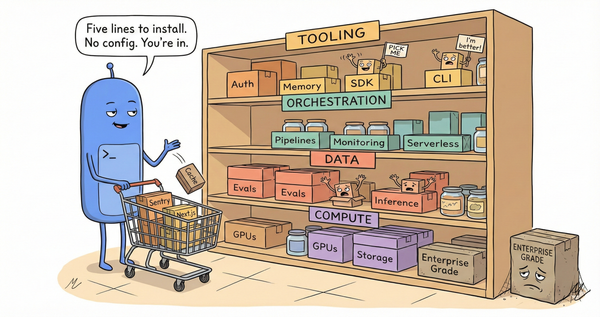

This pattern scales beyond my sock purchase. When an AI assistant can reinterpret reviews through the lens of individual user goals, the entire evaluation phase of commerce shifts.

Amazon generates roughly $56 billion a year from advertising. By some estimates it captures nearly 80% of U.S. retail media spending, in an industry that research firms put at almost $200 billion annually worldwide. That business depends on people browsing Amazon's site, seeing sponsored placements, and getting nudged by recommendations. An AI assistant that bypasses all of that and finds the best product for the user, including products with "bad" reviews that are actually good for that specific user? That threatens the model.

Retailer behavior tells you they understand what's at stake. In November 2025, Amazon sued Perplexity, alleging that Perplexity's Comet AI assistant violated Amazon's terms of service and degraded a shopping experience Amazon had spent decades building. Perplexity called it a "bully tactic." Amazon has also blocked OpenAI-related crawlers via robots.txt. The message is clear: if you want our data, you stay inside our house.

But the lines are being drawn the other way too. At the National Retail Federation conference in January, Google announced its Universal Commerce Protocol, an open standard enabling AI agents to handle checkout directly within Gemini and Search. The launch partners: Walmart, Target, Shopify, Wayfair, Etsy. Notably absent: Amazon. OpenAI launched Instant Checkout in ChatGPT last September. Microsoft shipped Copilot Checkout in early January with Shopify, PayPal, and Stripe.

The behavioral data supports the shift. According to Similarweb, Walmart's share of AI referrals doubled to 32.5% over the course of 2025. Amazon's share dropped from 40% to under 11%. Amazon remains dominant in overall engagement, but the referral trend shows where early-adopter traffic is moving.

Most major retailers are choosing partnership over resistance. Amazon is betting it can go it alone. Even some of Amazon's own third-party sellers see the gap. Vendors who would welcome traffic from external AI agents note that Amazon's Rufus captures demand but can't compete with agents that operate across multiple marketplaces. To an agent that compares across marketplaces, any single retailer becomes one row in a table.

The Limitation

This worked because the inference was verifiable. I could check whether the socks were actually loose. I could confirm whether my feet felt better. The prediction had a testable outcome.

For more complex products or subjective evaluations, this kind of reasoning gets harder to trust. If an assistant told me that a laptop's "slow boot time" was actually a feature because it gave me time to prepare mentally for work, I'd be skeptical. The flaw-to-feature translation needs to be grounded in actual causal relationships, not just clever reframing.

The socks worked because circulation and looseness are genuinely related. Tight elastic bands create constant pressure on the calf, which can restrict blood flow and leave marks on the skin. Loose elastic means less pressure, and less pressure means better circulation while sleeping. The assistant wasn't spinning. It was connecting real attributes to real outcomes through an actual causal chain.

That's the line to watch. Goal-aware inference is powerful when it's grounded. It's less useful when it's not. The difference between insight and rationalization is whether the underlying logic holds up to scrutiny.

What I'm Watching For

Long-context models that hold onto user preferences across an entire conversation should be better at this kind of inference. They have more information about what the user actually needs, which means they can evaluate product attributes more accurately.

This might be one of the places where AI assistants create value beyond convenience. Not just finding products faster, but finding products that a keyword search would have excluded. Products that look wrong on paper but are right in context.

A retailer's review system tags sentiment as absolute: positive or negative. An assistant that understands use-case fit can see that the same review is both, depending on who's reading it. That's a different kind of product intelligence. And it explains why the industry is scrambling to figure out where it fits.

I bought socks that some hikers panned. They're perfect for sleeping. The assistant figured that out before I did, which raises the harder question: when do you trust an AI telling you the flaws are features?