Don't Forget Your Design (Patterns)

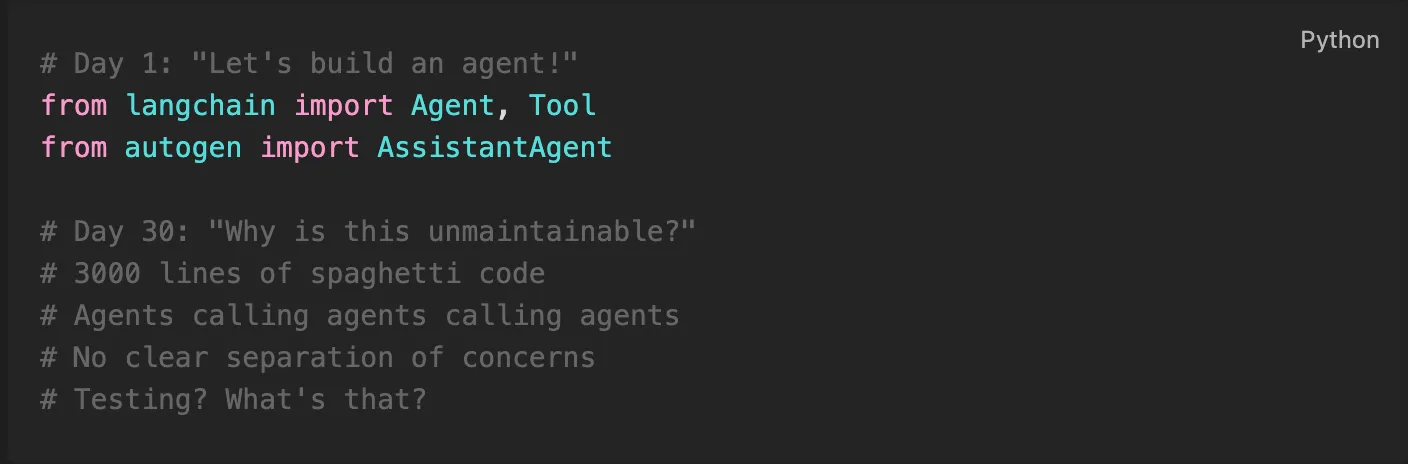

If you’ve been building AI agents lately, you’ve probably seen this pattern (pun intended):

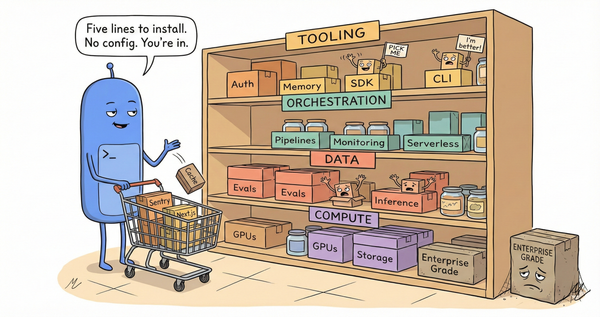

We’re in the middle of an Agentic AI gold rush. LangChain, AutoGen, CrewAI, and dozens of other frameworks promise to make building AI agents as easy as importing a library. And they do - until your system needs to scale beyond the tutorial examples.

Here’s the uncomfortable truth: Building an Agentic AI system without design patterns is like building a distributed system in 2010 without understanding REST, microservices, or message queues. You’ll ship something. It will work. And then it will become unmaintainable.

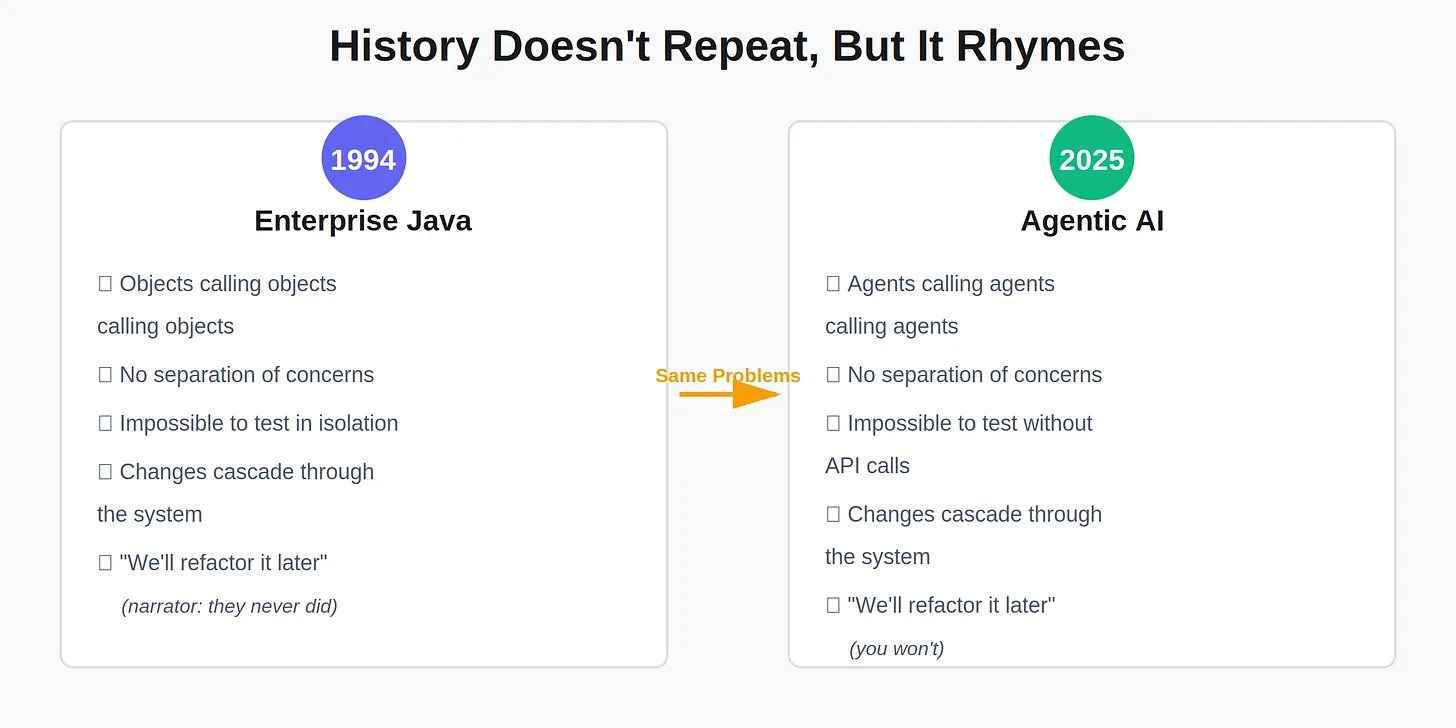

The exact same architectural problems that plagued enterprise Java in the 90s are plaguing Agentic AI in the 2020s. We just don’t recognize them because we’re distracted by the LLM calls.

History Doesn’t Repeat, But It Rhymes

Here’s the pattern you might have missed:

The chaos you’re experiencing building Agentic AI systems in 2025 is the same chaos developers experienced building object-oriented enterprise systems in the mid-90s. Different technology, same architectural problems.

The Gang of Four published Design Patterns: Elements of Reusable Object-Oriented Software in 1994 specifically to solve these problems. Thirty years later, we’re rediscovering why those patterns mattered.

The insight: Strip away the LLM calls, and agentic AI systems are just distributed systems with probabilistic compute primitives. The architectural challenges - service abstraction, algorithm selection, job persistence, lifecycle management, data storage - aren’t AI problems. They’re software problems. And they were solved three decades ago.

The Missing Foundation

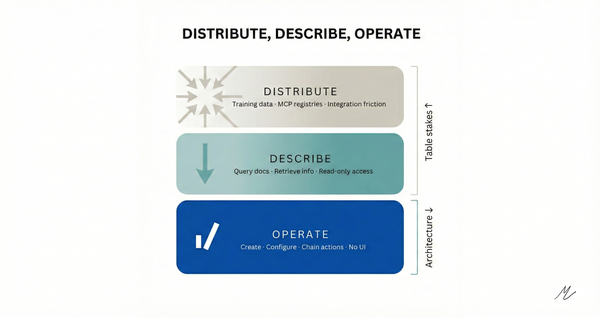

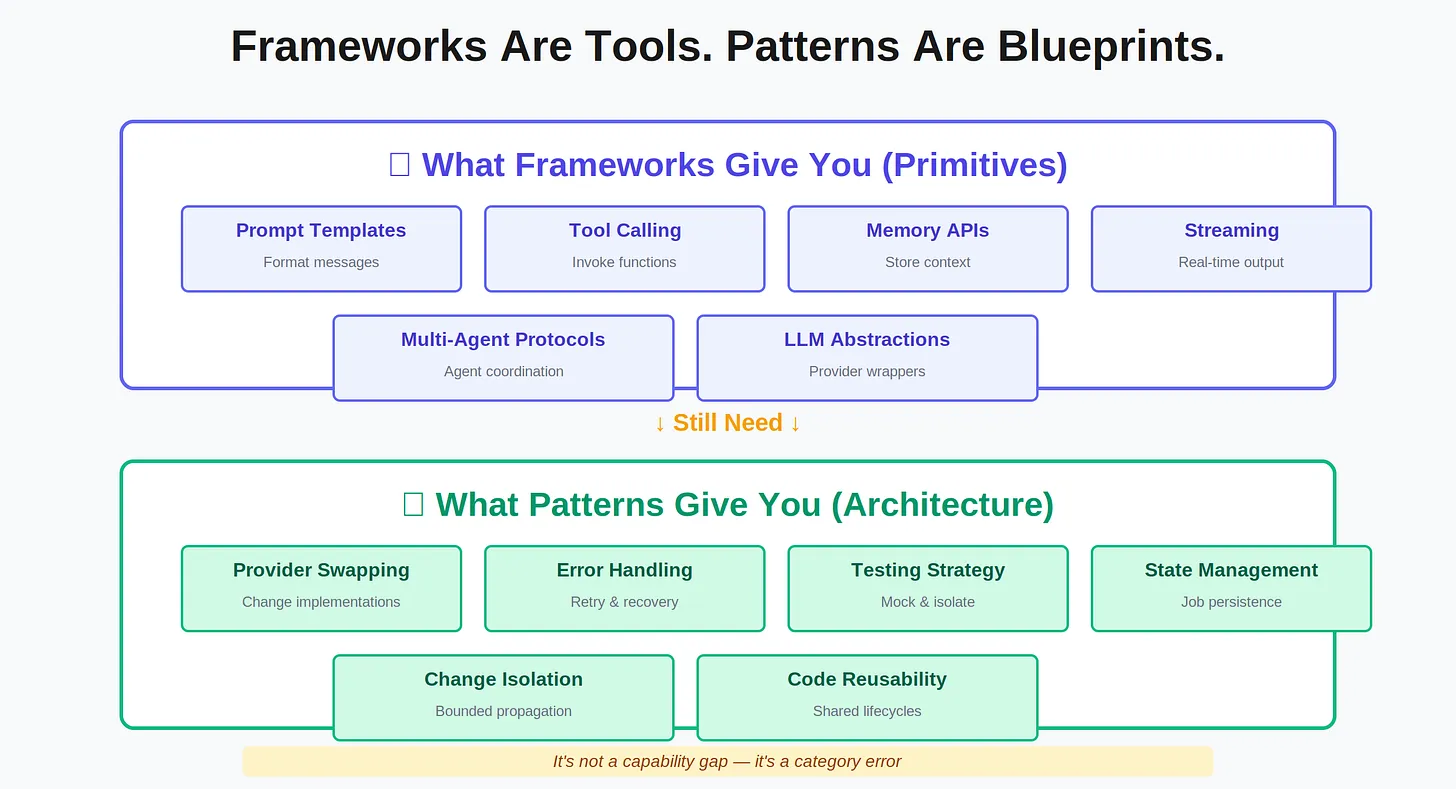

Agentic frameworks give you orchestration primitives:

- Prompt templates

- Tool calling interfaces

- Multi-agent communication protocols

- Memory abstractions

- Streaming responses

What they don’t give you is architectural discipline.

When your agent system needs to:

- Swap LLM providers without rewriting every agent

- Retry failed operations with exponential backoff

- Scale from 10 jobs/day to 10,000 jobs/day

- Add new content sources without touching existing code

- Migrate from SQLite to Postgres without breaking agents

- Test components in isolation

... you discover that “import AutoGen” doesn’t magically solve software architecture problems.

Why Frameworks Can’t Give You Architecture

It’s not a criticism - it’s a category error.

LangChain’s job: “Here’s how to call LLMs, chain prompts, and manage memory”

Architecture’s job: “Here’s how to structure code so changes don’t cascade”

Asking LangChain to provide architecture is like asking Express.js to provide microservice patterns. Express gives you routing; you still need to decide on:

- Service boundaries

- Data flow

- Error handling strategy

- Testing approach

- State management

Frameworks are tools. Patterns are blueprints.

You can build a house with just tools (hammer, saw, nails). But without blueprints, you’ll end up with rooms that don’t connect, plumbing that crosses electrical, and a structure that collapses when you try to add a second floor.

This isn’t LangChain’s fault. It’s doing exactly what it should: providing primitives. Your job is to compose those primitives into maintainable systems. That requires architectural thinking, not just framework knowledge.

Design Patterns: The Foundation Under the Framework

Let me show you what this looks like in practice, with a real example from GyanAgent - a personal knowledge management system powered by autonomous agents. It ingests content from YouTube, podcasts, newsletters, RSS feeds, and web articles, then uses AI to cluster insights and surface what matters.

The result: 11 classical Gang of Four design patterns working in harmony beneath the LLM calls.

Not because I am a pattern purist. As a matter of fact, I didn’t even take a pattern-first approach. As I built the product, one pattern after another turned out to be a natural fit for the design challenge at hand.

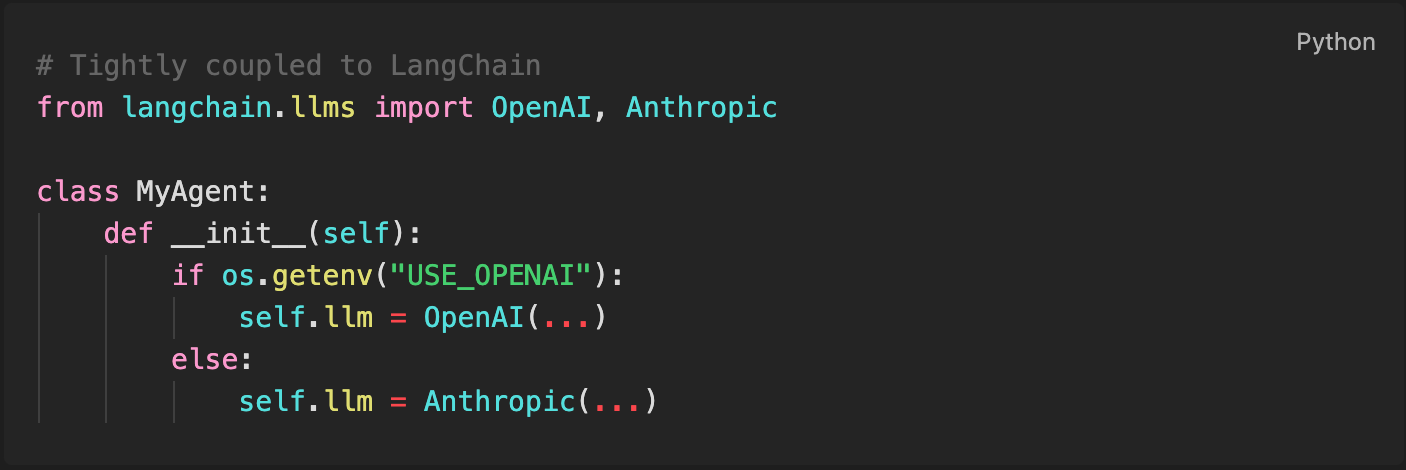

Example 1: Factory Method vs. Framework Lock-In

The Problem: I needed to support multiple LLM providers (OpenAI, Anthropic, local Llama models) and multiple ASR engines (Faster-Whisper, MLX-Whisper) without each agent knowing which implementation it’s using.

Framework-first approach:

Problem: Every agent needs branching logic. Swapping providers means touching every file.

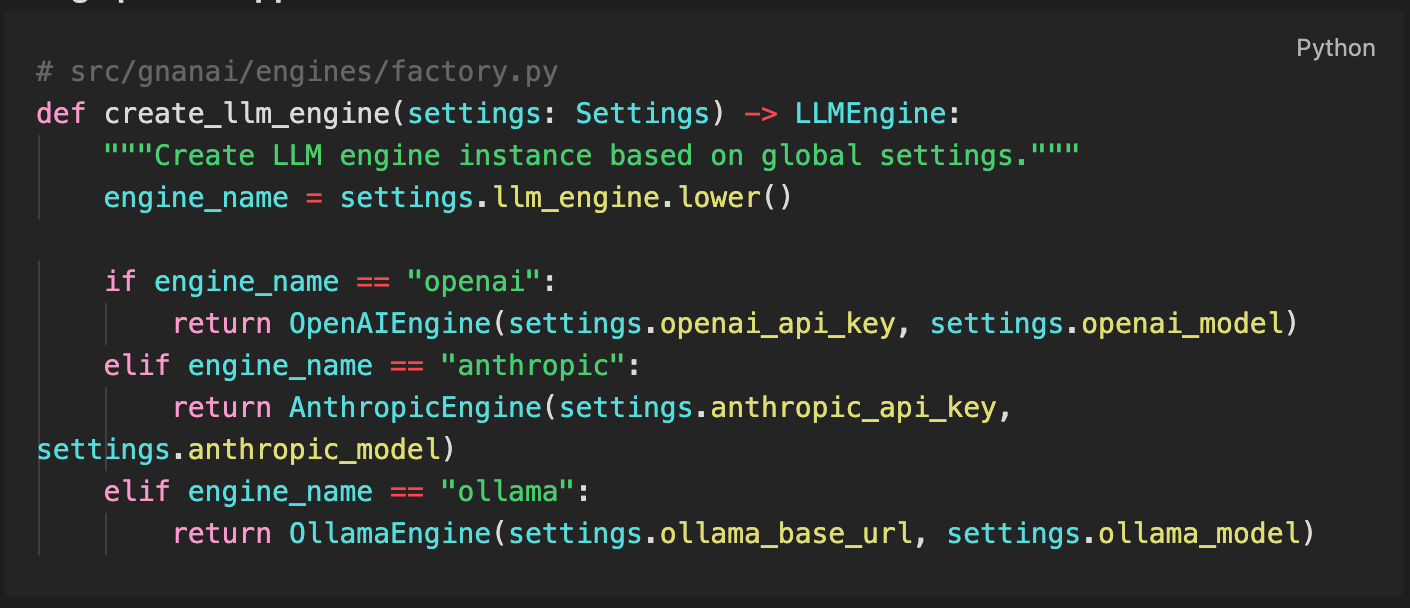

Design pattern approach:

Impact: I’ve swapped LLM providers 12+ times during development. Each swap: change one environment variable, restart. Zero code changes.

When I wanted to swap to MLX-Whisper (GPU-accelerated transcription for Apple Silicon), I added it in 80 lines of code without touching any agent logic. Transcription got 2x faster. Agents didn’t notice.
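Here is a minimal sketch of the provider-factory idea, not GyanAgent's actual code. The class names, the `create_llm_provider` function, and the `LLM_PROVIDER` environment variable are all assumptions made for illustration, and the provider bodies are stubs where a real implementation would call a vendor SDK. It uses a registry-style factory function, which is a common Python rendering of the Factory Method idea.

```python
import os
from abc import ABC, abstractmethod
from typing import Dict, Optional, Type


class LLMProvider(ABC):
    """Interface every agent codes against (hypothetical name)."""

    @abstractmethod
    def complete(self, prompt: str) -> str: ...


class OpenAIProvider(LLMProvider):
    def complete(self, prompt: str) -> str:
        # Stub: a real implementation would call the vendor SDK here.
        return f"[openai] {prompt}"


class AnthropicProvider(LLMProvider):
    def complete(self, prompt: str) -> str:
        return f"[anthropic] {prompt}"


class LocalLlamaProvider(LLMProvider):
    def complete(self, prompt: str) -> str:
        return f"[llama] {prompt}"


# Adding a provider means adding one class and one registry entry.
_PROVIDERS: Dict[str, Type[LLMProvider]] = {
    "openai": OpenAIProvider,
    "anthropic": AnthropicProvider,
    "llama": LocalLlamaProvider,
}


def create_llm_provider(name: Optional[str] = None) -> LLMProvider:
    """Pick the provider from an argument or the LLM_PROVIDER env var (assumed name)."""
    key = (name or os.environ.get("LLM_PROVIDER", "openai")).lower()
    try:
        return _PROVIDERS[key]()
    except KeyError:
        raise ValueError(f"Unknown LLM provider: {key!r}") from None


class SummarizerAgent:
    """The agent only sees the interface, never a concrete provider."""

    def __init__(self, llm: LLMProvider) -> None:
        self.llm = llm

    def summarize(self, text: str) -> str:
        return self.llm.complete(f"Summarize: {text}")
```

With this shape, `SummarizerAgent(create_llm_provider())` keeps working when the environment variable changes, because the only code that knows concrete class names is the registry.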

Example 2: Template Method vs. Copy-Paste Agent Code

The Problem: I needed multiple maintenance agents (network monitoring, archive cleanup, quality checks) that all follow the same observe → decide → act → reflect lifecycle but do different things at each step.

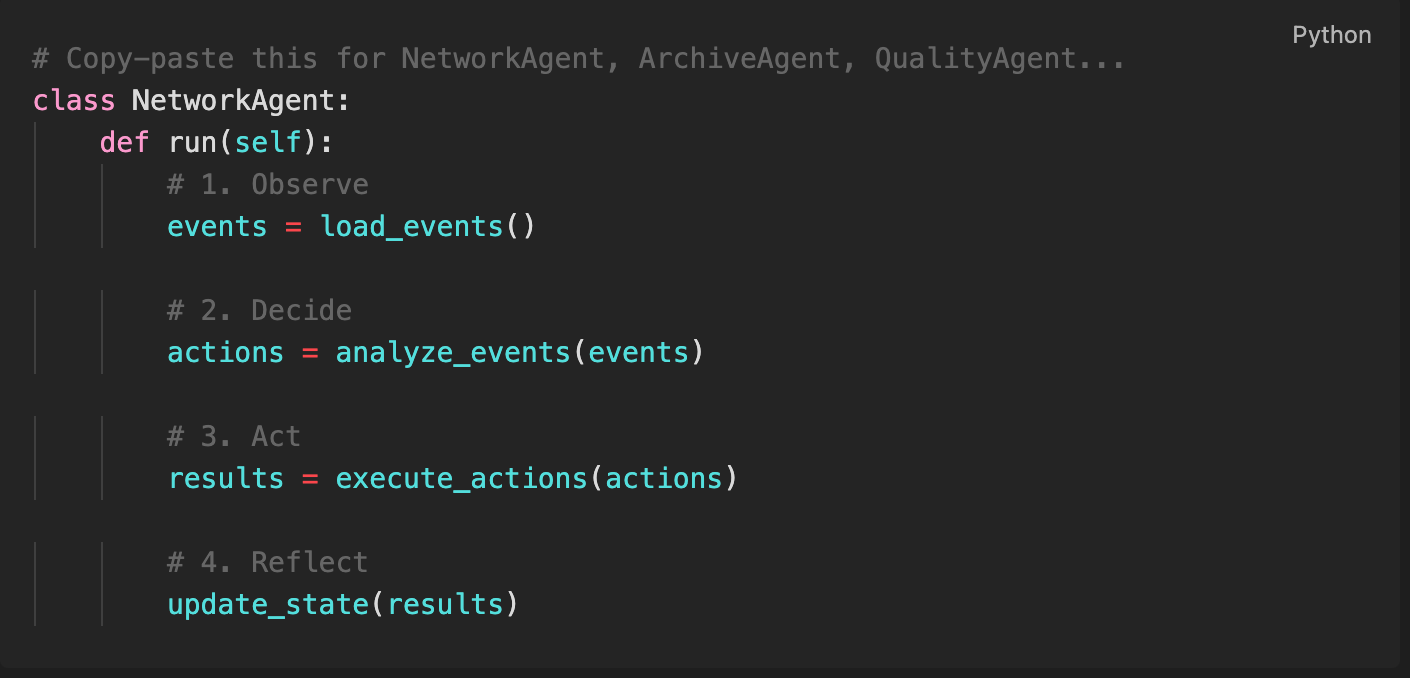

Framework-first approach:

Problem: Bugs in the lifecycle get copied across all agents. Adding telemetry means updating N files.

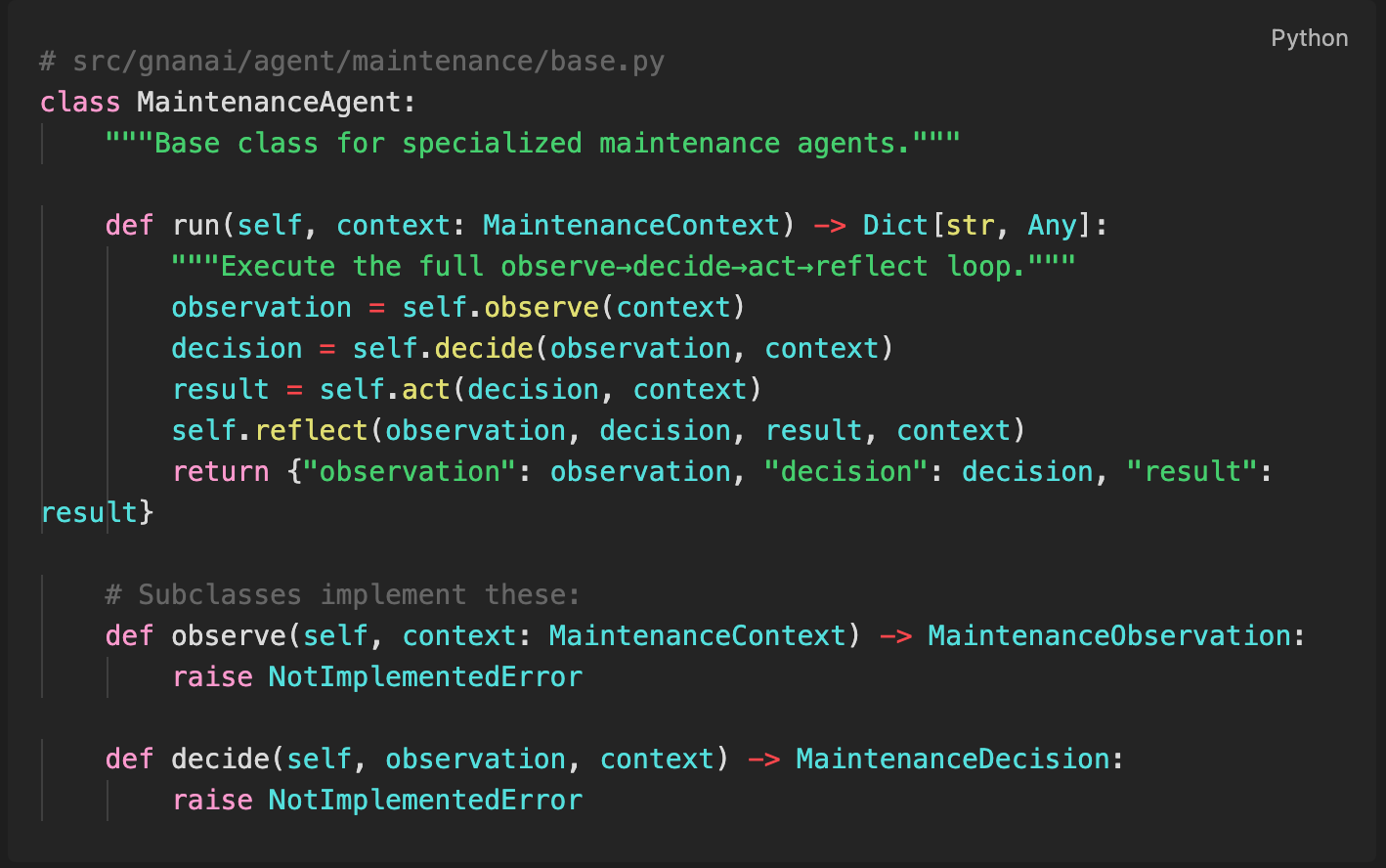

Design pattern approach:

Impact: When I added the Network Watcher agent, it took 120 lines of domain logic. The observe → decide → act → reflect orchestration? Already done. Logging? Already done. Error handling? Already done.

Adding telemetry to all agents: one line in the base class.
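The shared lifecycle can be sketched roughly as follows. This is an illustration under assumptions rather than the real base class: `MaintenanceAgent`, `ArchiveCleanupAgent`, and the in-memory `file_ages_days` dictionary are hypothetical stand-ins, and the "telemetry" is just a log line.

```python
import logging
from abc import ABC, abstractmethod
from typing import Any, Dict, List

logger = logging.getLogger("maintenance")


class MaintenanceAgent(ABC):
    """Base class that owns the observe -> decide -> act -> reflect lifecycle."""

    name = "agent"

    def run(self) -> Dict[str, Any]:
        """Template method: the order of steps is fixed, the steps are not."""
        logger.info("%s: cycle start", self.name)  # shared telemetry lives here
        try:
            observation = self.observe()
            decision = self.decide(observation)
            result = self.act(decision)
            self.reflect(result)
        except Exception as exc:
            # Shared error handling: one place, every agent benefits.
            logger.exception("%s: cycle failed", self.name)
            return {"agent": self.name, "ok": False, "error": str(exc)}
        return {"agent": self.name, "ok": True, "result": result}

    @abstractmethod
    def observe(self) -> Any: ...

    @abstractmethod
    def decide(self, observation: Any) -> Any: ...

    @abstractmethod
    def act(self, decision: Any) -> Any: ...

    def reflect(self, result: Any) -> None:
        """Optional hook; subclasses override it only when they need to."""
        logger.info("%s: result=%r", self.name, result)


class ArchiveCleanupAgent(MaintenanceAgent):
    """Hypothetical agent: only domain logic, no lifecycle code."""

    name = "archive_cleanup"

    def __init__(self, file_ages_days: Dict[str, int], max_age_days: int = 30) -> None:
        self.file_ages_days = file_ages_days  # stand-in for a real archive listing
        self.max_age_days = max_age_days

    def observe(self) -> Dict[str, int]:
        return dict(self.file_ages_days)

    def decide(self, observation: Dict[str, int]) -> List[str]:
        return sorted(f for f, age in observation.items() if age > self.max_age_days)

    def act(self, decision: List[str]) -> List[str]:
        for filename in decision:
            del self.file_ages_days[filename]
        return decision
```

A new agent in this sketch overrides three methods and inherits logging and error handling from `run()`, which is why a lifecycle fix lands in one file instead of N.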

Example 3: Strategy vs. Hard-Coded Algorithms

The Problem: Clustering 200 daily insights requires different algorithms depending on data quality - HDBSCAN for clean data, agglomerative clustering for salvage passes, metadata-based fallbacks when embeddings fail.

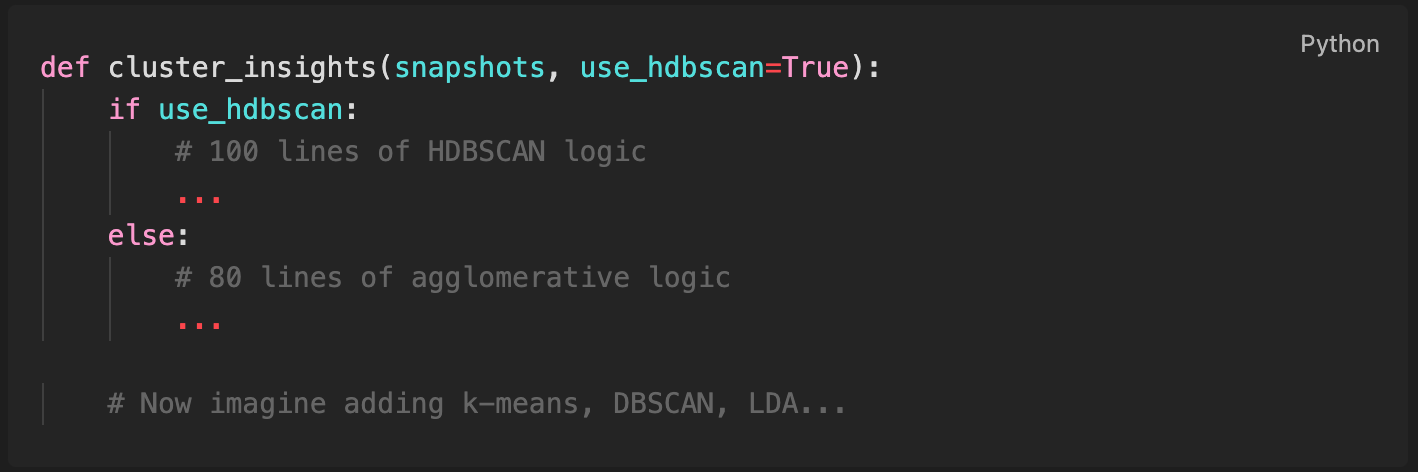

Framework-first approach:

Problem: The function grows unbounded. Testing individual algorithms requires mocking the entire pipeline.

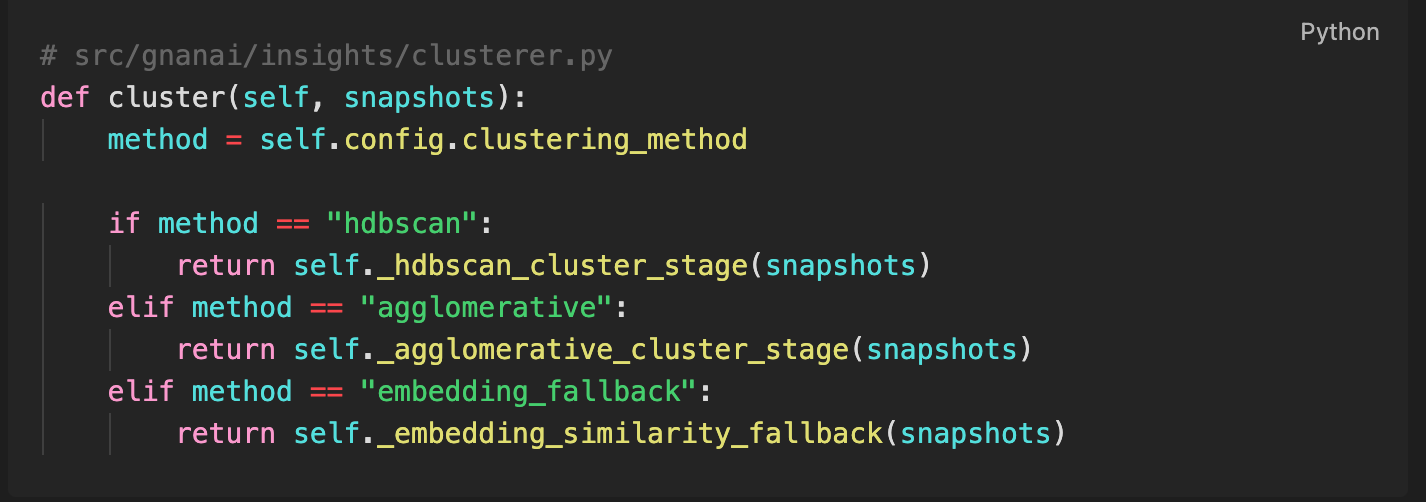

Design pattern approach:

Impact: I’ve tuned clustering parameters across 47 runs. Each experiment: change one YAML value in config.yaml. No code deployments. No restart required (config hot-reloads).

When I discovered cosine distance worked better than Euclidean for embeddings, I added it as a strategy. Old runs remained reproducible. New runs got better quality. Both strategies coexist for A/B testing.
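To make the config-driven swap concrete, here is a small sketch in which the distance metric is the strategy. Everything in it is an assumption for illustration: a plain dict stands in for `config.yaml`, and `greedy_cluster` is a toy stand-in for HDBSCAN or agglomerative clustering, chosen so the example runs with the standard library alone.

```python
import math
from typing import Any, Callable, Dict, List, Sequence

Vector = Sequence[float]
DistanceFn = Callable[[Vector, Vector], float]


def euclidean(a: Vector, b: Vector) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cosine(a: Vector, b: Vector) -> float:
    """Cosine distance: 0.0 for same direction, 1.0 for orthogonal vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm if norm else 1.0


# Strategies are looked up by name, so both can coexist for A/B runs.
DISTANCES: Dict[str, DistanceFn] = {"euclidean": euclidean, "cosine": cosine}


def greedy_cluster(vectors: List[Vector], distance: DistanceFn, threshold: float) -> List[int]:
    """Toy clustering: join the first cluster whose seed is within threshold."""
    seeds: List[Vector] = []
    labels: List[int] = []
    for vector in vectors:
        for label, seed in enumerate(seeds):
            if distance(vector, seed) <= threshold:
                labels.append(label)
                break
        else:
            # No existing cluster is close enough, so start a new one.
            seeds.append(vector)
            labels.append(len(seeds) - 1)
    return labels


def cluster_insights(vectors: List[Vector], config: Dict[str, Any]) -> List[int]:
    """The pipeline only knows the config keys, not which metric is in use."""
    distance = DISTANCES[config["distance"]]
    return greedy_cluster(vectors, distance, config["threshold"])


vectors = [(1.0, 0.0), (10.0, 0.0), (0.0, 1.0)]
print(cluster_insights(vectors, {"distance": "cosine", "threshold": 0.1}))     # [0, 0, 1]
print(cluster_insights(vectors, {"distance": "euclidean", "threshold": 2.0}))  # [0, 1, 0]
```

The two runs group the same vectors differently: cosine treats `(1, 0)` and `(10, 0)` as identical in direction, while Euclidean separates them by magnitude. Each metric is also a plain function that can be unit-tested without the pipeline.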

“Aren’t Design Patterns Outdated?”

Yes - for the problems they were overused for.

GoF patterns got a bad reputation because Java developers used them everywhere:

- SingletonFactoryManagerProvider (7 layers of abstraction for a config file)

- AbstractStrategyFactoryBean (patterns for the sake of patterns)

- Enterprise architectures with 40 interfaces for 3 implementations

The Java community applied patterns speculatively - “we might need to swap this someday, so let’s add 6 abstraction layers now.” This created the bloated enterprise codebases that gave patterns a bad name.

But the patterns themselves aren’t the problem - misapplying them is.

When you actually have:

- Multiple LLM providers to swap between → Factory makes sense

- Multiple algorithms to A/B test → Strategy makes sense

- Shared agent lifecycle that varies by implementation → Template Method makes sense

- Jobs that need retry/persistence → Command makes sense

The difference: I am using patterns to solve actual problems, not speculative ones.

GyanAgent uses 11/23 GoF patterns - not because I love patterns, but because I hit 11 specific problems that patterns solve. The other 12? I don’t use them because I don’t have those problems yet.

No Singleton (global state is problematic in distributed systems).

No Builder (Factory Method is sufficient for my construction needs).

No Visitor (I’m not traversing complex object structures).

This is pattern-driven by necessity, not dogma.

Framework vs. Foundation: A False Dichotomy

Here’s what I’m not saying:

✗ “Don’t use LangChain/AutoGen/CrewAI”

✗ “Reinvent everything from scratch”

✗ “Design patterns solve AI-specific problems”

Here’s what I am saying:

✓ Frameworks are plumbing; patterns are architecture

LangChain gives you prompt templating. The Strategy pattern helps you swap algorithms without breaking tests.

✓ Patterns scale when frameworks hit limits

AutoGen’s AssistantAgent works great for demos. The Command pattern + job queue handles thousands of retries/day with exponential backoff.

✓ Foundation-first prevents framework lock-in

When you abstract provider interfaces (Factory), agent lifecycles (Template Method), and data stores (Facade), swapping frameworks becomes tractable. I evaluated 3 different orchestration approaches. The core agents didn’t change.
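The Command-plus-queue idea mentioned above (jobs as objects, retried with exponential backoff) might look roughly like this. It is a sketch under assumptions: `Job`, `clone_for_retry`, `backoff_delay`, and `run_queue` are hypothetical names, the queue is an in-memory `deque` rather than a persistent store, and the `sleep` parameter is injectable only so the example can be exercised without waiting.

```python
import copy
import time
from collections import deque
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Iterable


@dataclass
class Job:
    """Command: a unit of work captured as data so it can be queued and retried."""

    job_id: str
    action: Callable[[Dict[str, Any]], Any]
    payload: Dict[str, Any] = field(default_factory=dict)
    attempt: int = 0

    def execute(self) -> Any:
        return self.action(self.payload)

    def clone_for_retry(self) -> "Job":
        """Prototype-style copy: same job_id and payload, attempt counter bumped."""
        retry = copy.copy(self)
        retry.payload = copy.deepcopy(self.payload)
        retry.attempt = self.attempt + 1
        return retry


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 60.0) -> float:
    """Exponential backoff: base * 2^attempt, capped."""
    return min(cap, base * (2 ** attempt))


def run_queue(
    jobs: Iterable[Job],
    max_attempts: int = 3,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, Any]:
    """Run jobs in FIFO order; failed jobs are re-queued until max_attempts."""
    queue = deque(jobs)
    results: Dict[str, Any] = {}
    while queue:
        job = queue.popleft()
        try:
            results[job.job_id] = job.execute()
        except Exception as exc:
            if job.attempt + 1 >= max_attempts:
                results[job.job_id] = exc  # give up and record the failure
            else:
                sleep(backoff_delay(job.attempt))
                queue.append(job.clone_for_retry())
    return results
```

Capping attempts is what keeps a permanently failing job from retrying (and billing) forever. A production version would persist the queue and probably add jitter to the delay.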

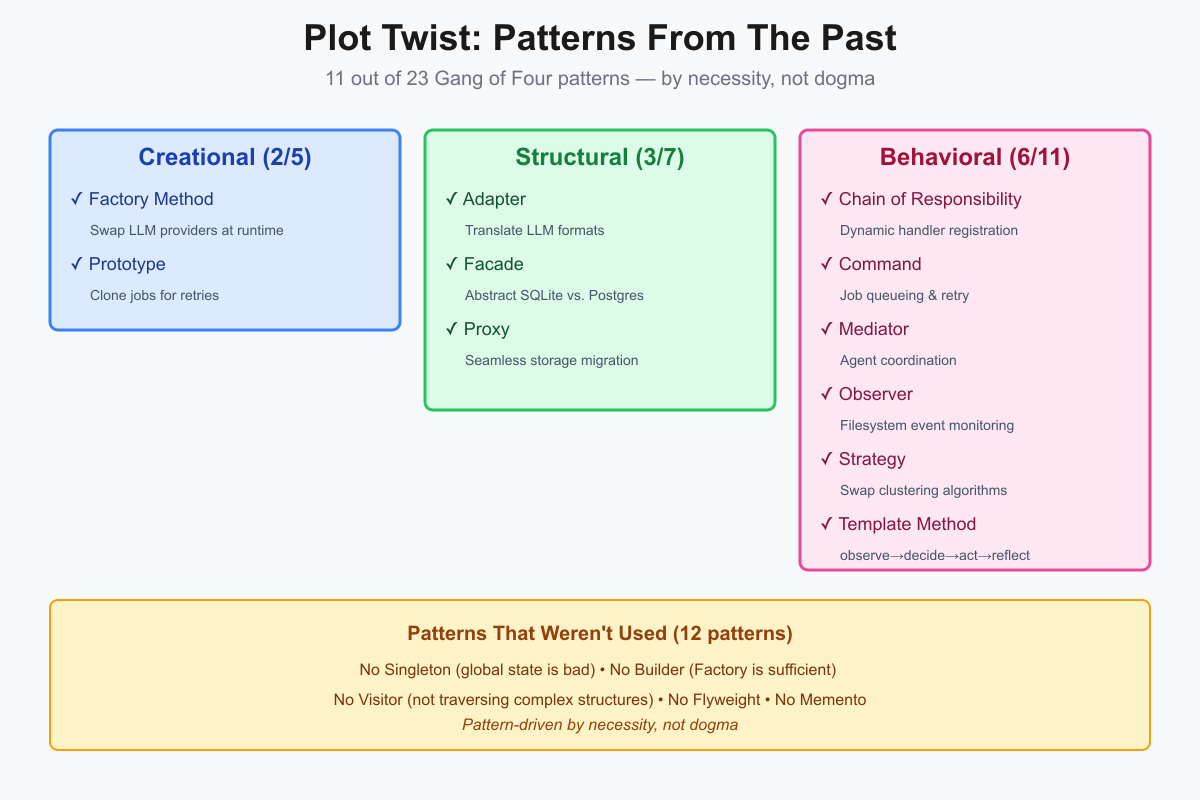

Plot Twist: Patterns From The Past

After iteratively building GyanAgent, I audited the codebase to see which patterns emerged organically. As mentioned above, I didn’t plan these upfront - I implemented them when I hit specific pain points that needed solving.

Here’s what I found:

Creational (2/5)

- Factory Method - Swap LLM providers, ASR engines, downloaders at runtime

- Prototype - Clone jobs for retries without losing identity

Structural (3/7)

- Adapter - Translate preference signals across heterogeneous LLM prompt formats

- Facade - Abstract SQLite vs. Postgres so agents remain backend-agnostic

- Proxy - Delegate storage operations with `__getattr__` for seamless migration

Behavioral (6/11)

- Chain of Responsibility - RSS/Gmail special handlers register dynamically

- Command - Encapsulate jobs as objects for queueing, retry, scheduling

- Mediator - Maintenance dispatcher coordinates agents without coupling

- Observer - Filesystem watcher emits events for inbox monitoring

- Strategy - Swap clustering algorithms (HDBSCAN/agglomerative/fallback)

- Template Method - Maintenance agents inherit observe→decide→act→reflect
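As one illustration from this list, the `__getattr__`-based Proxy could be sketched like this. The store classes, their methods, and `switch_backend` are hypothetical, and the "backends" are in-memory dicts standing in for SQLite and Postgres so the example runs on its own.

```python
from typing import Any, Dict, Optional


class SQLiteStore:
    """Stand-in for a SQLite-backed store (in-memory dict for this sketch)."""

    backend_name = "sqlite"

    def __init__(self) -> None:
        self._rows: Dict[str, str] = {}

    def save_insight(self, insight_id: str, text: str) -> None:
        self._rows[insight_id] = text

    def get_insight(self, insight_id: str) -> Optional[str]:
        return self._rows.get(insight_id)


class PostgresStore(SQLiteStore):
    """Same interface, different backend (also a dict here)."""

    backend_name = "postgres"


class StorageProxy:
    """Agents hold this object; the real backend can change underneath them."""

    def __init__(self, backend: Any) -> None:
        self._backend = backend

    def switch_backend(self, backend: Any) -> None:
        self._backend = backend

    def __getattr__(self, name: str) -> Any:
        # __getattr__ runs only when normal lookup fails, so _backend and
        # switch_backend resolve normally; everything else is delegated.
        if name == "_backend":
            # Guard against infinite recursion if _backend was never set.
            raise AttributeError(name)
        return getattr(self._backend, name)


store = StorageProxy(SQLiteStore())
store.save_insight("i1", "patterns still matter")
print(store.backend_name, store.get_insight("i1"))

store.switch_backend(PostgresStore())
print(store.backend_name, store.get_insight("i1"))  # new backend starts empty
```

The trade-off of blanket delegation is that typos in method names surface only at call time, so a stricter version would define the interface explicitly.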

11 out of 23 Gang of Four patterns.

Not because I am a pattern zealot. Because I hit 11 problems that have been solved for 30 years.

The other 12 GoF patterns? I don’t use them - because I don’t have those problems. This is what pragmatic pattern usage looks like: use them when you need them, not because they exist.

I didn’t use patterns for the sake of patterns. These 11 emerged organically when solving real problems:

- “How do I swap providers without breaking agents?” → Factory

- “How do I retry jobs without duplicating state?” → Prototype

- “How do I add new handlers without modifying pipelines?” → Chain of Responsibility

- “How do I test clustering without running the full pipeline?” → Strategy

Each pattern solved a specific pain point. Each made the system more maintainable.
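The "add new handlers without modifying pipelines" question can be sketched as a small Chain of Responsibility. The handler names, the matching rules, and the `register_handler` decorator are assumptions for illustration; the real handlers presumably do far more than return a label.

```python
from typing import Callable, List, Optional

# A handler returns a result if it recognizes the source, otherwise None.
Handler = Callable[[str], Optional[str]]

_HANDLERS: List[Handler] = []


def register_handler(handler: Handler) -> Handler:
    """Decorator: appending to the chain is the only step needed to plug in."""
    _HANDLERS.append(handler)
    return handler


@register_handler
def rss_handler(source: str) -> Optional[str]:
    if source.endswith(".xml") or "/feed" in source:
        return "rss"
    return None


@register_handler
def gmail_handler(source: str) -> Optional[str]:
    if source.startswith("gmail:"):
        return "gmail"
    return None


def route(source: str, default: str = "web_article") -> str:
    """Walk the chain; the first handler that claims the source wins."""
    for handler in _HANDLERS:
        result = handler(source)
        if result is not None:
            return result
    return default


print(route("https://example.com/feed"))  # rss
print(route("gmail:newsletters"))         # gmail
print(route("https://example.com/post"))  # web_article
```

Supporting a new source means writing one decorated function. `route` and the existing handlers stay untouched.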

Why This Matters for Agentic Systems

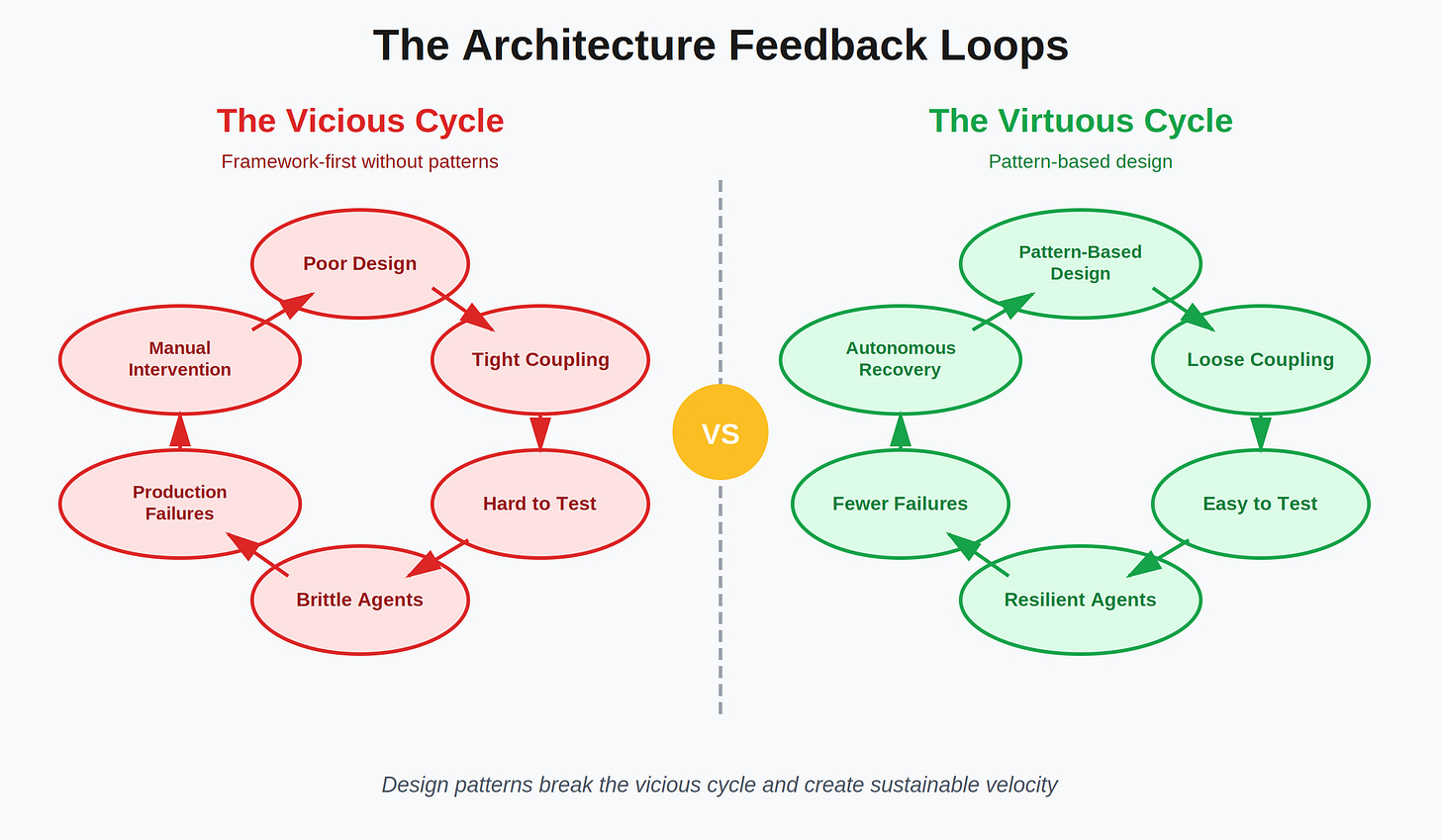

AI agents amplify architectural debt.

A poorly designed web API might serve slow responses. A poorly designed agent system might:

- Burn through $500 in API costs retrying the same failure

- Lose 3 hours of processing when a single agent crashes

- Require 2-week rewrites to switch from OpenAI to Anthropic

- Make testing impossible because everything is tightly coupled

The feedback loop is brutal:

… and design patterns break this cycle:

The Question That Separates AI Engineers from Prompt Engineers

Are you building software systems that happen to use AI? Or are you gluing AI APIs together and hoping it scales?

The difference shows up when:

- OpenAI changes their API → System adapts in hours vs. emergency 2-week rewrite

- You need to test without burning credits → Mockable interfaces vs. hardcoded API calls

- You want to add agent #8 → Takes 3 days vs. takes 3 weeks

- You need to swap algorithms → Config change vs. code change across 5 files

- Rate limits hit → Automatic retry with backoff vs. manual intervention

If you can’t answer “system adapts” to all five scenarios, you don’t have architecture - you have duct tape.
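The "test without burning credits" scenario is the easiest to demonstrate. In this sketch, `LLMClient`, `FakeLLM`, and `TaggingAgent` are hypothetical names; the point is only that an agent written against an interface can be tested with a canned response instead of a network call.

```python
from typing import List, Protocol


class LLMClient(Protocol):
    """Anything with a complete() method satisfies this interface."""

    def complete(self, prompt: str) -> str: ...


class FakeLLM:
    """Test double: returns a canned answer and records the prompts it saw."""

    def __init__(self, canned: str) -> None:
        self.canned = canned
        self.prompts: List[str] = []

    def complete(self, prompt: str) -> str:
        self.prompts.append(prompt)
        return self.canned


class TaggingAgent:
    """Hypothetical agent under test; it never imports a vendor SDK."""

    def __init__(self, llm: LLMClient) -> None:
        self.llm = llm

    def tags_for(self, text: str) -> List[str]:
        raw = self.llm.complete(f"List comma-separated tags for: {text}")
        # Normalize whatever the model returns into clean lowercase tags.
        return [tag.strip().lower() for tag in raw.split(",") if tag.strip()]


def test_tags_are_normalized() -> None:
    fake = FakeLLM("AI,  Design Patterns ,")
    agent = TaggingAgent(fake)
    assert agent.tags_for("some article") == ["ai", "design patterns"]
    assert len(fake.prompts) == 1  # exactly one model call, zero API spend


test_tags_are_normalized()
```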

Conclusion: Build Your Software On Good Design

The best agentic systems I’ve seen share one trait: they’re **well-designed software systems that happen to use AI**, not AI projects with software tacked on.

GyanAgent processes thousands of knowledge documents, generates distilled daily insights, runs 8 autonomous agents on a schedule, and syncs to production with 99.6% bandwidth efficiency. The AI is impressive. The architecture is what makes it maintainable.

When I refactored clustering to use cosine distance instead of Euclidean, orphaned documents dropped from 52 to 5 (90% improvement). Zero lines of agent code changed. The Strategy pattern isolated the algorithm. The Template Method preserved the lifecycle. The Facade kept storage consistent.

That’s the power of design patterns in Agentic AI: change propagation is bounded.

So before you `pip install langchain` and start building agents, ask yourself:

- Can I swap LLM providers in one line?

- Can I test my agents without API calls?

- Can I add a new content source without touching existing code?

- Can I retry failures without duplicating state management?

- Can I migrate storage backends without rewriting agents?

If the answer is “no,” you don’t have an architecture problem. You have a design patterns problem.