Constraint vs. Capability: What Claude's and ChatGPT's Architectures Reveal About AI Platform Strategy

Here's something I've noticed after months of heavy use: Claude handles file operations with surprising reliability. ChatGPT... doesn't. Not consistently, anyway.

I'm not talking about edge cases. I mean simple requests - "export this as markdown," "give me a downloadable CSV." In ChatGPT, these fail often enough that I've built workarounds. In Claude, I rarely think about it.

I wanted to understand why. As far as I can tell, the answer is architectural rather than incidental, and it reveals something important about how AI platforms should be designed.

Two Theories of Platform Design

When Anthropic launched Artifacts in mid-2024 and OpenAI scaled Code Interpreter, each made a distinct architectural bet:

Anthropic's thesis: Constrain capability to maximize reliability. Remove entire problem domains rather than solving them imperfectly.

OpenAI's thesis: Maximize capability surface area. Give users power and flexibility, even if it introduces failure modes.

By early 2026, both platforms have evolved and partially converged. But the original design philosophies still explain why their failure modes differ so sharply: Artifacts typically fails visibly (rendering issues you can see), while Code Interpreter can fail silently (ephemeral data loss, expired download links).

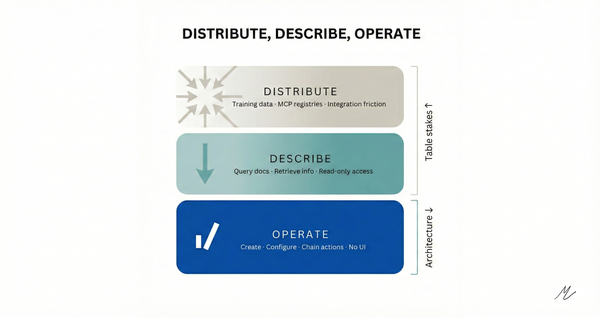

Anthropic: Constraint as Strategy

Artifacts was built by a small team in roughly three months. Product designer Michael Wang characterized the approach: "A huge chunk of Artifacts is 'just' presentational UI. The heavy lifting is happening in the model itself."

This wasn't accidental minimalism - it was a design philosophy.

Claude's Artifacts render client-side: the model produces structured content (Markdown documents, code, SVG, Mermaid diagrams, HTML pages, or React components), and interactive artifacts run inside sandboxed iframes in the browser. Anthropic leans on browser isolation rather than giving each chat a long-lived server container or a user-visible filesystem, even though Artifacts still depend on Anthropic's infrastructure behind the scenes.

By keeping the platform layer thin, Anthropic:

- Reduced the surface area for bugs

- Avoided complex server-side execution infrastructure

- Made reliability a function of browser rendering (mature, well-understood) rather than container orchestration (complex, failure-prone)
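To make the "thin platform" idea concrete, here is a minimal Python sketch. The `Artifact` type and the `render` and `export` helpers are hypothetical, not Anthropic's code or API, and the HTML strings stand in for what a browser would actually do. The point it illustrates: the artifact carries its full source, so rendering is a pure function of the payload and "export as markdown" is just writing bytes the client already holds. An unsupported kind raises an error instead of quietly producing nothing, which mirrors the visible-failure mode described earlier.

```python
import html
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class Artifact:
    """Hypothetical self-contained artifact: everything needed to show it travels with it."""
    kind: str    # e.g. "markdown" or "html" (illustrative kinds only)
    title: str
    source: str  # the full content, not a link to it


def render(artifact: Artifact) -> str:
    """Pure function of the payload: no server session, container, or file store is consulted."""
    if artifact.kind == "html":
        # Isolate generated markup in a sandboxed iframe, loosely mirroring browser isolation.
        escaped = html.escape(artifact.source, quote=True)
        return f'<iframe sandbox="allow-scripts" srcdoc="{escaped}"></iframe>'
    if artifact.kind == "markdown":
        return f"<pre>{html.escape(artifact.source)}</pre>"
    # Unsupported content fails loudly, so the user sees the problem immediately.
    raise ValueError(f"cannot render artifact kind {artifact.kind!r}")


def export(artifact: Artifact, directory: Path) -> Path:
    """Exporting is just writing content the client already holds."""
    suffix = ".md" if artifact.kind == "markdown" else ".html"
    path = directory / f"{artifact.title}{suffix}"
    path.write_text(artifact.source, encoding="utf-8")
    return path


if __name__ == "__main__":
    notes = Artifact(kind="markdown", title="notes", source="# Notes\n- item")
    with tempfile.TemporaryDirectory() as tmp:
        saved = export(notes, Path(tmp))
        print(saved.read_text(encoding="utf-8") == notes.source)  # True
    print(render(notes) == render(notes))  # True: same payload, same output
```

Nothing in this path has a lifetime: as long as the payload exists, it can be rendered or saved again.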

The tradeoff is real: inside an Artifact you can't run arbitrary Python, process large datasets server-side, or generate binary files directly. But within those constraints, it works reliably.

Anthropic's engineering blog describes Claude Code as "intentionally low-level and unopinionated." The same philosophy runs through Artifacts: the model does what models do well (generate structured output); the platform does what platforms do well (render, persist, serve). Neither pretends to be the other.

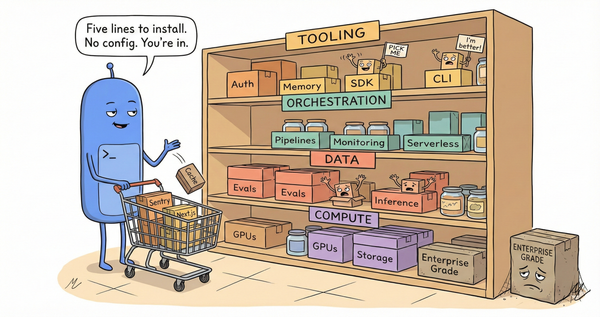

OpenAI: Capability as Strategy

ChatGPT isn't trying to be a document editor. It's trying to be everything.

Code Interpreter runs Python in sandboxed server-side containers. That's genuinely powerful - you can upload CSVs, generate charts, process images, and run computations that would be impractical in a browser.

But it brings distributed systems complexity into what looks like a chat interface.

The critical detail: containers expire after 20 minutes of inactivity. Files written to /mnt/data/ are ephemeral. Download links are pointers to temporary storage that can vanish before you click them.

Users expect chat to be stateless - send a message, get a response, done. Code Interpreter violates that expectation by introducing hidden state, hidden lifetimes, and hidden failure modes.
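The hidden-lifetime problem can be modeled in a few lines. This is a toy Python sketch, not OpenAI's implementation: `EphemeralSession` is a hypothetical class, the 20-minute idle window comes from the description above, and the injectable clock exists only so the expiry can be demonstrated without waiting. The key detail is that `write` hands back a path (a pointer into temporary storage), and a stale pointer yields `None` rather than an error.

```python
import time
from typing import Callable, Dict, Optional

IDLE_TIMEOUT_S = 20 * 60  # 20 minutes of inactivity, per the description above


class EphemeralSession:
    """Toy model of a sandbox whose files live only as long as the session stays active."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._files: Dict[str, bytes] = {}
        self._last_active = clock()

    def _reap_if_idle(self) -> None:
        # Once the idle window passes, the container and everything in it is discarded.
        if self._clock() - self._last_active > IDLE_TIMEOUT_S:
            self._files.clear()

    def write(self, path: str, data: bytes) -> str:
        self._reap_if_idle()
        self._files[path] = data
        self._last_active = self._clock()
        return path  # the user receives a pointer into temporary storage, not the bytes

    def download(self, link: str) -> Optional[bytes]:
        self._reap_if_idle()
        data = self._files.get(link)
        if data is not None:
            self._last_active = self._clock()  # a successful download counts as activity
        return data  # None for a stale link: a silent failure, no exception raised


if __name__ == "__main__":
    now = [0.0]  # fake clock, in seconds
    session = EphemeralSession(clock=lambda: now[0])
    link = session.write("/mnt/data/report.csv", b"a,b\n1,2\n")

    now[0] += 5 * 60
    print(session.download(link))  # b'a,b\n1,2\n'

    now[0] += 21 * 60
    print(session.download(link))  # None
```

From the user's side, both calls look identical; only the hidden clock decides whether the link still works.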

Sam Altman articulated OpenAI's approach in his January 2025 "Reflections" post: "We continue to believe that the best way to make an AI system safe is by iteratively and gradually releasing it into the world, giving society time to adapt and co-evolve with the technology." Translated into product practice, that means: ship fast, learn from usage, fix issues as they emerge.

The file download failures are, in this frame, acceptable costs of iteration.

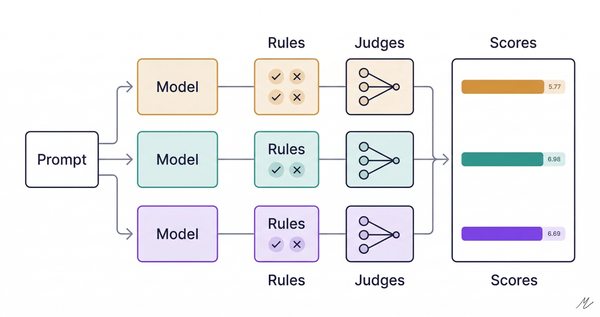

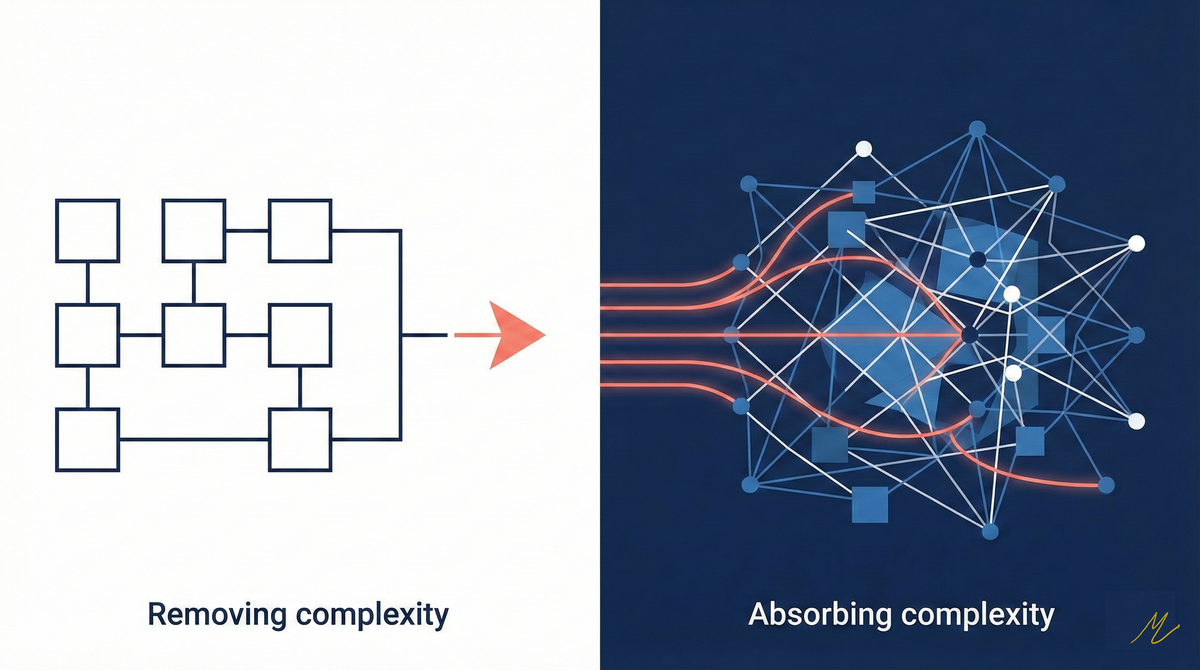

Absorbing vs. Removing Complexity

This is the core strategic difference:

OpenAI absorbs complexity into the platform. Want to run code? Here's a container. Want files? Here's a filesystem. Want to analyze data? Upload it.

Anthropic removes complexity from the platform. Want content? Here's an artifact. Persistence? That's a UI concern. Execution? That's your browser.

Absorption scales capability. Removal scales reliability.
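One back-of-the-envelope way to read "removal scales reliability": if a request only succeeds when every component on its path succeeds, and failures are independent (a simplifying assumption), end-to-end success is the product of the per-component success rates. The stage names and the uniform 99.9% figure below are invented for illustration; they are not measurements of either product.

```python
from math import prod
from typing import Dict

# Hypothetical request paths; each value is an assumed per-stage success rate.
REMOVED = {  # content is generated, then rendered in the browser
    "model output": 0.999,
    "browser render": 0.999,
}
ABSORBED = {  # content is produced by code in a server-side container and fetched via a link
    "model output": 0.999,
    "container start": 0.999,
    "code execution": 0.999,
    "file write": 0.999,
    "link still valid at click": 0.999,
}


def end_to_end_success(stages: Dict[str, float]) -> float:
    """Series reliability: every stage must succeed, with failures assumed independent."""
    return prod(stages.values())


if __name__ == "__main__":
    print(round(end_to_end_success(REMOVED), 4))   # 0.998
    print(round(end_to_end_success(ABSORBED), 4))  # 0.995
```

Every stage a platform absorbs adds another factor below 1; removing a stage entirely is the only way to take its factor out of the product.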

The Convergence (2025–2026)

Neither platform stayed static.

Anthropic expanded Artifacts with API access (`window.claude.complete()`), app hosting, and sharing. It is adding power, but incrementally, with explicit guardrails at each step.

OpenAI added safety frameworks and red-team evaluations, and shifted toward reasoning and reliability with its o-series models. The "ship fast, fix later" approach is maturing.

Both are converging toward a middle ground: capability with guardrails, reliability with power.

But the original architectures create path dependencies. The failure modes they built in persist even as capabilities converge.

The Deeper Lesson

Claude's reliability comes from architectural humility - refusing to solve problems that introduce brittleness, and instead designing them out of the system entirely.

ChatGPT's power comes from architectural ambition - absorbing complexity so users can do more, even if that "more" sometimes fails.

Both approaches have merit. Both have costs. The question isn't which is "right"; it's which foundation you want to build on. Starting with constraint and adding capability is a different journey from starting with capability and adding reliability. The former is harder to start but easier to extend.

Understanding where each platform came from helps you choose the tradeoffs that align with what your users actually need.